IQ-3

Content Navigation

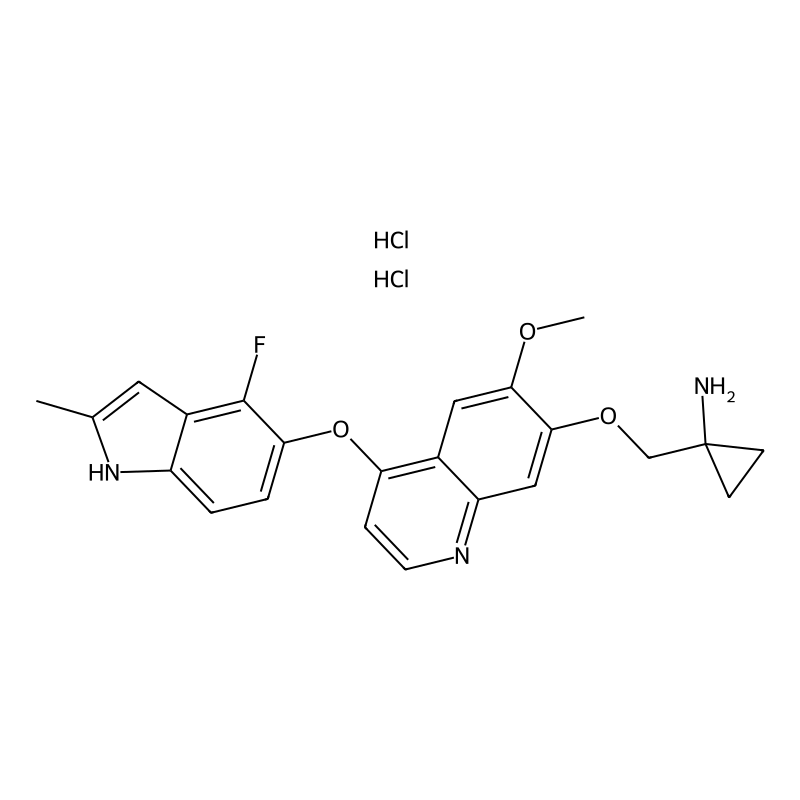

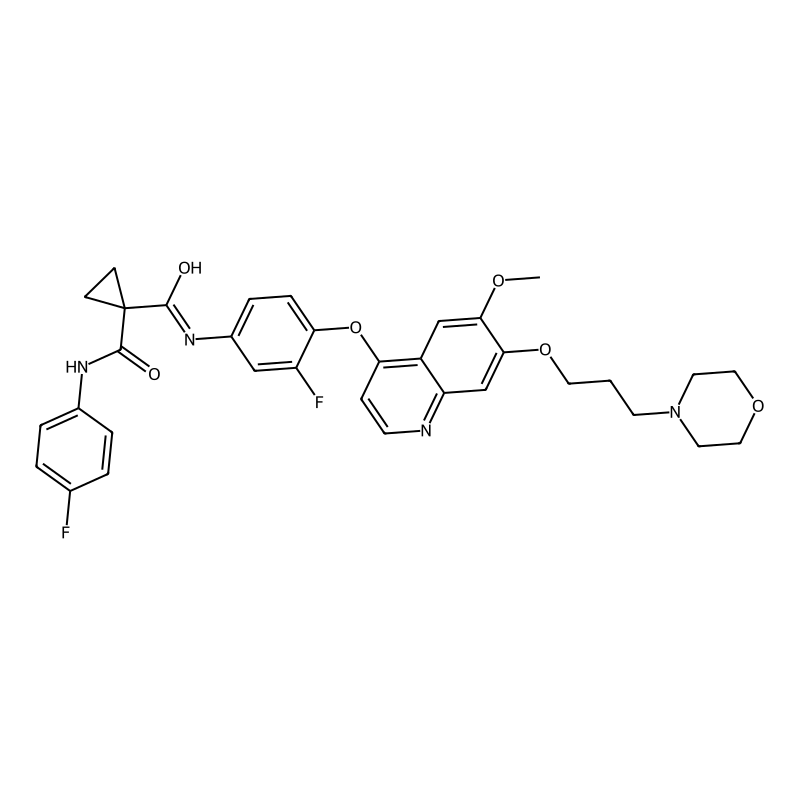

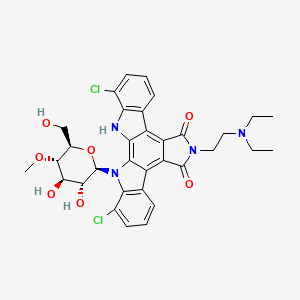

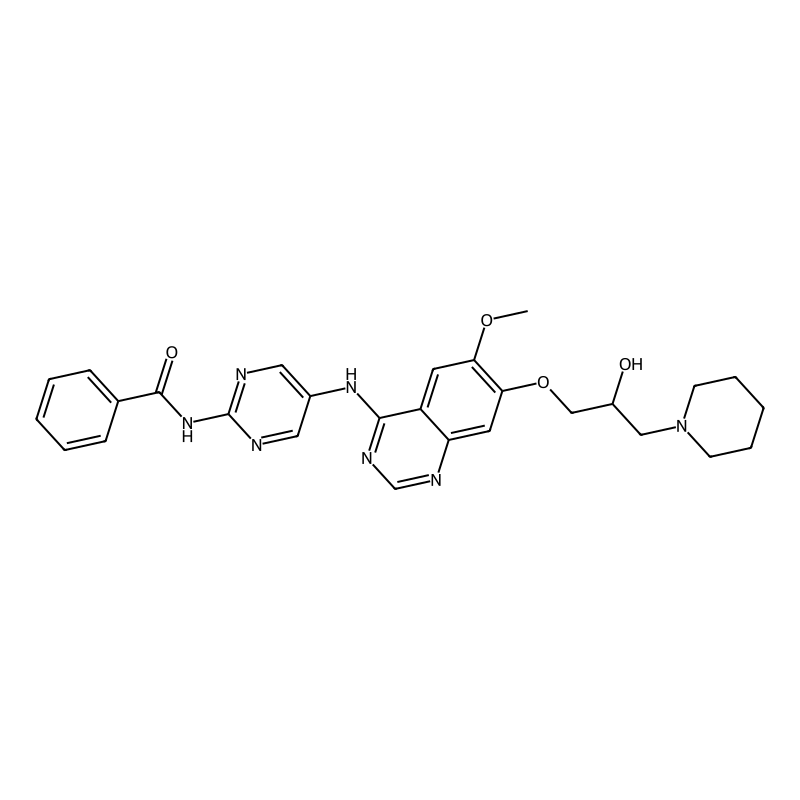

Product Name

IUPAC Name

Molecular Formula

Molecular Weight

InChI

InChI Key

SMILES

Canonical SMILES

IQGAP3 in Hedgehog and Wnt Signaling Pathways

The table below summarizes the core findings from two key studies on IQGAP3's mechanisms:

| Aspect | Study 1: Hedgehog Signaling [1] | Study 2: Wnt/β-catenin Signaling [2] |
|---|---|---|
| Core Finding | Promotes cancer stemness, metastasis, and radiotherapy resistance. | Establishes a positive feedback loop to hyperactivate Wnt signaling. |
| Key Mechanism | Upregulates/activates the transcription factor GLI1, a pivotal effector of the Hedgehog pathway [1]. | Disrupts the Axin1-CK1α interaction within the β-catenin destruction complex [2]. |
| Downstream Effect | Increases expression of stemness-related genes (e.g., NANOG, OCT4) and EMT markers [1]. | Inhibits β-catenin phosphorylation, leading to its stabilization and nuclear accumulation [2]. |
| Functional Outcome | Enhanced migration, invasion, and sphere-forming capability of lung cancer cells [1]. | Increased β-catenin levels and expression of pro-proliferation genes in gastric cancer cells [2]. |

Key Experimental Protocols

Here are the methodologies used in the cited research to uncover IQGAP3's functions:

- Gene Knockdown (Loss-of-Function): IQGAP3 expression was silenced in lung cancer cell lines (A549, H1299) using specific siRNAs (e.g., siIQGAP3-1: 5’-GGGUGUGGCUGUCAUGAAA-3’). Knockdown was confirmed via western blotting and qPCR [1].

- Interactome Mapping (Proximity Labeling): To identify IQGAP3-interacting proteins, researchers used TurboID, an engineered biotin ligase fused to IQGAP3. Cells expressing TurboID-IQGAP3 were treated with biotin and doxycycline, and biotinylated proteins were pulled down with streptavidin and identified by mass spectrometry [2]. This technique identified Axin1 and CK1α as novel interaction partners.

- Mechanistic Validation:

  - Co-immunoprecipitation (Co-IP): Used to confirm protein-protein interactions. For example, endogenous Co-IP in lung cancer cells demonstrated an interaction between IQGAP3 and GLI1 proteins [1]; Co-IP by itself does not distinguish direct from indirect binding. In gastric cancer cells, Co-IP showed that IQGAP3 overexpression reduces the interaction between Axin1 and CK1α [2].

  - Luciferase Reporter Assays: The transcriptional activity of β-catenin was measured using the TOPflash/FOPflash (pGL3-OT/OF) reporter system in cells where IQGAP3 was either overexpressed or knocked down [2].
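The reporter readout described above is usually summarized as a TOPflash/FOPflash ratio. The sketch below shows one common way to compute it. It assumes that firefly luminescence is normalized to a co-transfected Renilla control, which the source does not state, and every reading is a made-up placeholder rather than data from the cited study.

```python
from statistics import mean


def normalized_activity(firefly, renilla):
    """Mean firefly/Renilla ratio across replicate wells."""
    if len(firefly) != len(renilla) or not firefly:
        raise ValueError("need equal, non-empty replicate lists")
    return mean(f / r for f, r in zip(firefly, renilla))


def top_fop_ratio(top, fop):
    """TOPflash signal over FOPflash background.

    Each argument is a (firefly_readings, renilla_readings) pair.
    """
    return normalized_activity(*top) / normalized_activity(*fop)


# Hypothetical luminescence readings (arbitrary units), three wells each.
control = top_fop_ratio(
    top=([1200, 1100, 1300], [400, 400, 400]),
    fop=([300, 300, 300], [400, 400, 400]),
)
iqgap3_overexpressed = top_fop_ratio(
    top=([3600, 3300, 3900], [400, 400, 400]),
    fop=([300, 300, 300], [400, 400, 400]),
)

# Fold change in beta-catenin reporter activity relative to control.
fold_change = iqgap3_overexpressed / control
print(f"TOP/FOP control={control:.2f}, fold change={fold_change:.2f}")
```

Dividing by the FOPflash signal removes activity that does not depend on the TCF/LEF binding sites, so the ratio reflects β-catenin-dependent transcription only.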

Signaling Pathway Diagrams

The diagrams below, generated using Graphviz, illustrate the two key signaling pathways involving IQGAP3.

Diagram 1: IQGAP3 activates Hedgehog signaling and GLI1 to promote cancer stemness [1].

Diagram 2: IQGAP3 disrupts the destruction complex and creates a Wnt feedback loop [2].
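As a rough illustration of how such a diagram can be produced, the sketch below builds Graphviz DOT text for Diagram 1 from an edge list. The helper `to_dot`, the graph name, and the exact edges are assumptions: the edges are one reading of the Hedgehog column in the summary table, not the original figure source.

```python
def to_dot(name, edges):
    """Render (source, target, label) triples as Graphviz DOT text."""
    lines = [f"digraph {name} {{", "  rankdir=LR;", "  node [shape=box];"]
    for source, target, label in edges:
        lines.append(f'  "{source}" -> "{target}" [label="{label}"];')
    lines.append("}")
    return "\n".join(lines)


# Edges paraphrased from the Hedgehog column of the summary table [1].
hedgehog_edges = [
    ("IQGAP3", "GLI1", "upregulates/activates"),
    ("GLI1", "Stemness genes (NANOG, OCT4)", "increases expression"),
    ("GLI1", "EMT markers", "increases expression"),
    ("Stemness genes (NANOG, OCT4)", "Sphere formation", "promotes"),
    ("EMT markers", "Migration and invasion", "promotes"),
]

dot_text = to_dot("iqgap3_hedgehog", hedgehog_edges)
print(dot_text)
```

The printed text can be saved to a `.dot` file and rendered with the Graphviz `dot` command-line tool; Diagram 2 could be generated the same way from a second edge list.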

Summary and Research Implications

The evidence positions IQGAP3 as a central scaffold protein and a potential therapeutic target in multiple cancers. Its ability to integrate signals from both the Hedgehog and Wnt pathways, which are crucial for cell stemness and proliferation, makes it a high-value target for disrupting cancer progression and overcoming therapy resistance [1] [2]. Future research and drug discovery efforts might focus on developing small molecules or other modalities to disrupt its specific protein interactions.


IQGAP3 in Gastric Cancer: Core Findings and Mechanisms

The study establishes that IQGAP3 is not merely a proliferation marker but a central hub that coordinates multiple oncogenic signaling pathways to drive tumor growth, metastasis, and the formation of a supportive tumor microenvironment (TME) [1].

Key Oncogenic Functions

The core functions of IQGAP3 in promoting gastric cancer malignancy are summarized in the table below:

| Function | Mechanism & Impact |
|---|---|
| Signal Transduction Hub | Scaffolds and enhances KRAS-ERK signaling; its inhibition blocks phosphorylation events in this pathway [1]. |
| TME and Metastasis | Knockdown reduces key growth factors (e.g., TGFβ1), leading to fewer cancer-associated fibroblasts (CAFs) and impaired metastasis in vivo [1]. |
| Intratumoral Heterogeneity | Maintains two distinct cancer cell subpopulations (Ki67-high proliferating and Ki67-low slow-cycling); depletion collapses this functional heterogeneity [1]. |
| Therapeutic Target | IQGAP3 depletion dramatically reduces tumorigenesis and lung metastasis in mouse models, highlighting its potential as a multipronged therapeutic target [1]. |

Signaling Pathways and Functional Heterogeneity

IQGAP3 acts as a central node that potentiates crosstalk between the KRAS and TGFβ signaling pathways. It also maintains a functional hierarchy within the tumor, which is essential for efficient growth.

The following diagram illustrates the core signaling pathway mediated by IQGAP3 and its downstream oncogenic effects:

IQGAP3 integrates KRAS and TGFβ signaling to drive cancer malignancy [1].

The study used digital spatial profiling to reveal how IQGAP3 maintains two functionally distinct subpopulations of cancer cells. The experimental workflow and key finding are illustrated below:

Workflow for spatial analysis of IQGAP3-mediated tumor heterogeneity [1].

Experimental Models and Quantitative Data

The research employed a range of models and techniques to validate IQGAP3's role. The characteristics of the primary gastric cancer (GC) cell lines used are summarized below:

| Cell Line | Lauren Classification | Type | Key Genetic Features | IQGAP3 Expression (in vitro) | pERK Level | pSMAD3 Level |
|---|---|---|---|---|---|---|
| AGS | Intestinal | Epithelial | KRAS G12D mutation | High | High | High |
| NUGC3 | Diffuse | Epithelial | FGFR1/FGF19 amplification | High | Low | Low |
| Hs746T | Diffuse | Mesenchymal | MET mutation/amplification | Low | High | Low |

Molecularly diverse GC cell lines used for IQGAP3 functional studies [1].

Core Experimental Protocols

1. In Vitro Transcriptomic Profiling via RNA-Sequencing

- Objective: To define the global downstream molecular targets and pathways regulated by IQGAP3 in different GC contexts [1].

- Methodology:

  - Cell Lines: AGS, NUGC3, and Hs746T were selected for their molecular diversity [1].

  - Knockdown: IQGAP3 expression was silenced using siRNA (siIQ3) transfection [1].

  - Analysis: RNA from control and siIQ3 cells was subjected to RNA-sequencing. The data were analyzed using Gene Set Enrichment Analysis (GSEA) to identify significantly altered signaling pathways [1].

- Outcome: IQGAP3 knockdown consistently led to significant downregulation of KRAS signaling across all lines. It also impaired TGFβ signaling and Epithelial-Mesenchymal Transition (EMT) in specific cell lines [1].
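To make the GSEA step more concrete, the sketch below computes a simplified, unweighted running-sum enrichment score for one gene set over a ranked gene list. It is not the pipeline used in the study: full GSEA weights each hit by its ranking metric and assesses significance by permutation, and the gene identifiers here are placeholders.

```python
def enrichment_score(ranked_genes, gene_set):
    """Unweighted running-sum enrichment score (Kolmogorov-Smirnov style).

    ranked_genes: genes ordered from most upregulated to most
                  downregulated in knockdown versus control.
    gene_set:     set of genes belonging to the pathway of interest.
    Returns the running-sum value with the largest absolute deviation
    from zero; a negative score means the set sits near the bottom.
    """
    hits = [gene in gene_set for gene in ranked_genes]
    n_hits = sum(hits)
    n_miss = len(ranked_genes) - n_hits
    if n_hits == 0 or n_miss == 0:
        raise ValueError("gene set must overlap the ranked list only partly")

    running, best = 0.0, 0.0
    for is_hit in hits:
        running += 1.0 / n_hits if is_hit else -1.0 / n_miss
        if abs(running) > abs(best):
            best = running
    return best


# Placeholder identifiers, ranked from most up to most down after knockdown.
ranked = ["G1", "G2", "G3", "G4", "G5", "G6"]
pathway_down = {"G5", "G6"}  # hypothetical set concentrated at the bottom

es_down = enrichment_score(ranked, pathway_down)
print(f"ES = {es_down:+.2f}")
```

Under this ranking convention, a strongly negative score for a KRAS signaling gene set would correspond to the downregulation reported after IQGAP3 knockdown.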

2. In Vivo Functional Validation

- Objective: To assess the impact of IQGAP3 on tumor growth and metastasis in a live organism [1].

- Methodology:

  - Xenograft Models: Immunodeficient mice were injected subcutaneously with control or IQGAP3-knockdown GC cells (e.g., NUGC3) to monitor tumorigenesis [1].

  - Metastasis Models: For lung metastasis assays, cells were likely injected intravenously (e.g., via the tail vein) and lungs were later examined for metastatic nodules [1].

  - Spatial Analysis: Tumors from these models were formalin-fixed, paraffin-embedded (FFPE), and analyzed using Digital Spatial Profiling (DSP) to link IQGAP3 function to intratumoral heterogeneity [1].

- Outcome: IQGAP3 knockdown resulted in attenuated tumorigenesis and significantly reduced lung metastasis. Immunofluorescence and DSP confirmed a reduction in TGFβ/SMAD signaling, αSMA-positive stromal cells (CAFs), and loss of functional subpopulations [1].

Conclusion and Research Implications

This research positions IQGAP3 as a master regulator of gastric cancer malignancy, primarily through its role as a scaffold protein that:

- Serves as a signaling hub for KRAS-ERK and TGFβ pathways [1].

- Orchestrates the tumor microenvironment by modulating key growth factors [1].

- Sustains intratumoral heterogeneity, which is critical for robust tumor growth [1].

Targeting IQGAP3 offers a strategic approach to simultaneously disrupt multiple oncogenic processes. The findings suggest that future research and drug development efforts should focus on identifying and developing small molecules or protein-protein interaction inhibitors that can disrupt IQGAP3's scaffolding function.


The IQ3 Motif: A Specific Scaffold for the PI3K-Akt Pathway

The IQ3 motif is a specific sequence (amino acids 806–825) within the larger IQ domain of the scaffolding protein IQ motif-containing GTPase-activating protein 1 (IQGAP1) [1]. Its primary defined function is to act as a critical molecular platform that specifically scaffolds components of the PI3K-Akt signaling pathway.

The table below summarizes the core characteristics and research findings related to the IQ3 motif:

| Aspect | Description & Findings |
|---|---|
| Location & Structure | A 20-amino acid motif (IQ3) within the IQ domain of the IQGAP1 protein [1]. |
| Key Interactions | Binds directly to PIPKIα (Phosphatidylinositol-4-phosphate 5-kinase) and the p85α regulatory subunit of PI3K [1]. |
| Specificity | Deletion or blockade of the IQ3 motif disrupts binding to PI3K-Akt pathway components but does not affect interactions with the Ras-ERK pathway components (e.g., ERK, EGFR) [1]. |
| Functional Role | Essential for the efficient, EGF-stimulated generation of PIP3 and subsequent activation of Akt. It positions lipid kinases for concerted signaling [1]. |
| Functional Consequences | Blocking the IQ3 motif inhibits EGF-stimulated Akt activation, cell proliferation, migration, and invasion [1]. |
| Therapeutic Potential | The IQ3 motif is a promising therapeutic target for suppressing PI3K-Akt driven cancers, offering an alternative to direct kinase inhibition [1]. |

Research Context and Therapeutic Implications

The research on the IQ3 motif is situated within the broader context of targeting frequently dysregulated signaling pathways in cancer, particularly the EGFR and PI3K-Akt pathways [1].

- Scaffolding as a Targeted Strategy: Direct inhibition of kinases like EGFR and PI3K has shown limited clinical success. Targeting scaffolding proteins like IQGAP1 offers a strategy to disrupt specific downstream pathways with potentially greater selectivity [1].

- Overcoming Pathway Crosstalk: The ERK1/2 and PI3K/Akt pathways often exhibit crosstalk, and inhibition of one can lead to compensatory activation of the other, contributing to drug resistance. The specificity of the IQ3 motif for the PI3K-Akt pathway makes it a valuable target for overcoming this resistance [2] [1].

- Role in PI3K Signaling: The PI3K/Akt pathway is a crucial intracellular regulator of cell growth, survival, and metabolism. It is frequently activated in cancer, which makes it a major therapeutic target [3] [4]. The IQGAP1 scaffold, via the IQ3 motif, assembles PI4KIIIα, PIPKIα, and PI3K to sequentially generate the lipid messenger PIP3, which recruits and activates PDK1 and Akt [1].

Key Experimental Workflow

The following diagram illustrates the logical flow and key experiments used to validate the function and specificity of the IQ3 motif in the cited research [1]:

Current Research Gaps and Future Directions

The following areas remain open for further investigation:

- Detailed Binding Kinetics: The affinity (Kd) and stoichiometry of the IQ3 motif's interaction with PIPKIα and p85α are not reported in the cited sources.

- In Vivo Validation: The cited studies are primarily in vitro. Efficacy and toxicity studies in animal models are a critical next step for therapeutic development.

- High-Resolution Structure: A crystal or NMR structure of the IQ3 motif bound to its partners would greatly aid in rational drug design.

- Compound Screening: No specific small-molecule or peptide inhibitors (beyond the research peptide) targeting this interaction are described in the cited sources.

References

- 1. The Specificity of EGF-Stimulated IQGAP1 Scaffold ... [nature.com]

- 2. Current and Emerging Therapies for Targeting the ERK1/2 ... [mdpi.com]

- 3. Exploring research trends and hotspots in PI3K/Akt signaling ... [pmc.ncbi.nlm.nih.gov]

- 4. Regulation of PI3K signaling in cancer metabolism and PI3K ... [tbcr.amegroups.org]

Comprehensive Application Notes and Protocols for IQ-3 Data Collection in Cognitive Research

Introduction to Data Collection Framework

Current research methodologies emphasize the importance of integrating both primary data collection (gathered directly from participants through standardized tests and experiments) and secondary data sources (existing datasets, published research, and normative databases) to establish comprehensive benchmarks. The SPIRIT 2025 statement emphasizes that complete, transparent, and accessible protocols are critical for the planning, conduct, reporting, and external review of research studies, including those investigating cognitive functions [1]. This approach ensures that intelligence assessment methodologies can be properly evaluated, replicated, and compared across studies and populations.

Data Collection Methods & Strategic Approaches

Primary vs. Secondary Data Collection

Primary Data Collection: This approach involves gathering information directly from research participants through specifically designed instruments and tasks. In intelligence research, this typically includes standardized cognitive tests, performance-based measurements, and experimental tasks that assess various cognitive domains such as working memory, executive function, and processing speed. The key advantage of primary collection lies in the researcher's control over data structure, timing, and participant identity from the initial contact. When designed effectively, primary data collection maintains unique participant identities across multiple assessment points, enabling longitudinal tracking and cohort comparisons without the common pitfalls of data fragmentation that plague traditional approaches [2].

Secondary Data Collection: This method utilizes existing datasets that were originally compiled for different purposes, such as government databases, academic research repositories, organizational records, and published normative data for standardized intelligence tests. The strategic value of secondary data lies in its ability to provide contextual benchmarks and population-level comparisons without the time and resource investments required for primary data collection. However, successful integration requires careful attention to data compatibility, measurement equivalence, and temporal alignment to ensure meaningful comparisons [2].

Mixed-Method Integration: The most robust approach to intelligence assessment combines both primary and secondary data collection within a unified framework. This integrated strategy allows researchers to enrich individual participant data with population norms and historical trends, creating a more comprehensive understanding of cognitive performance. Proper implementation requires deliberate planning of data structures and automatic alignment mechanisms to avoid the manual reconciliation processes that often consume significant research resources [2].
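One simple form of this integration is converting a participant's raw score (primary data) into a norm-referenced standard score using published norms (secondary data). The sketch below assumes the conventional deviation scale with mean 100 and standard deviation 15; the `NORMS` table, its age bands, and its values are hypothetical placeholders, not real test norms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Norm:
    """Normative mean and standard deviation for one reference group."""
    mean: float
    sd: float


# Hypothetical normative table keyed by age band (not real test norms).
NORMS = {
    "18-29": Norm(mean=50.0, sd=10.0),
    "30-44": Norm(mean=48.0, sd=9.0),
}


def standard_score(raw, age_band, norms=NORMS):
    """Convert a raw score to a standard score (mean 100, SD 15)."""
    norm = norms[age_band]
    z = (raw - norm.mean) / norm.sd
    return 100.0 + 15.0 * z


score = standard_score(raw=60, age_band="18-29")
print(score)
```

Keying the norms by an explicit reference group makes the alignment between the primary record and the secondary source visible, and a missing group raises a `KeyError` instead of silently borrowing the wrong norm.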

Data Quality Dimensions and Assessment

The AIMQ methodology (AIM Quality) provides a validated framework for assessing information quality across multiple dimensions that are particularly relevant to intelligence research. This model organizes quality attributes into four quadrants based on whether information is considered a product or service, and whether assessment occurs against formal specifications or customer expectations [3]. Note that in Table 1, "IQ" denotes information quality, not intelligence quotient.

Table 1: Data Quality Dimensions Based on AIMQ Framework

| Quality Category | Key Dimensions | Research Application Examples |
|---|---|---|
| Intrinsic IQ | Accuracy, Objectivity, Believability, Reputation | Calibration of testing equipment, standardized administration procedures |
| Contextual IQ | Relevancy, Value-added, Timeliness, Completeness, Appropriate amount | Selection of cognitive tests specific to research questions, timing of assessments |
| Representational IQ | Interpretability, Ease of understanding, Consistent representation, Concise representation | Clear visualization of cognitive test results, consistent scoring rubrics |
| Accessibility IQ | Accessibility, Access security | Secure storage of participant data, controlled access to cognitive assessment results |

Each dimension contributes uniquely to the overall validity of intelligence assessment. For instance, intrinsic quality factors ensure that cognitive measurements are accurate and objective, while contextual quality factors guarantee that the data collected is relevant to the specific research questions being investigated. Research has demonstrated that systematic attention to these quality dimensions significantly enhances the reliability and validity of intelligence assessment outcomes in both clinical and research settings [3].

Experimental Research Protocols

Study Design and Sampling Methodology

Robust experimental protocols form the foundation of valid intelligence research. The SPIRIT 2025 guidelines emphasize the importance of comprehensive protocol documentation that clearly describes all aspects of study design, including randomization procedures, sample characteristics, and eligibility criteria [1]. A well-structured protocol should explicitly define the research objectives related to both benefits and potential harms of interventions, along with a statistical analysis plan that is finalized before data collection begins.

When investigating cognitive abilities, the participant sampling approach must ensure adequate representation of the target population. Research protocols should specify:

- Eligibility criteria that clearly define inclusion and exclusion parameters

- Sample size determination with appropriate power analysis

- Recruitment strategies that minimize selection bias

- Randomization procedures when assigning participants to experimental conditions
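The sample size item in the list above can be illustrated with a standard normal-approximation formula for comparing two group means, n per group = 2((z₁₋α/₂ + z_power) / d)². This is only a sketch: the effect size, alpha, and power are illustrative defaults, and an exact t-based calculation gives slightly larger numbers.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Participants per group for a two-sided, two-sample comparison.

    effect_size: standardized mean difference (Cohen's d), must be > 0.
    Uses the normal approximation n = 2 * ((z_alpha + z_beta) / d) ** 2.
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)
    z_beta = standard_normal.inv_cdf(power)
    return ceil(2 * ((z_alpha + z_beta) / effect_size) ** 2)


# Illustrative planning values: medium effect, 5% alpha, 80% power.
n_medium = n_per_group(0.5)
print(n_medium)
```

Because the required n scales with 1/d², halving the expected effect size roughly quadruples the sample, which is why the assumed effect size should be justified in the protocol.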

A recent study on learning and motor control provides an exemplary model for sampling methodology, specifying that "the sample for the experienced group will be selected from a local Pilates studio. Participants must have more than six months of practice, with more than 1 h of practice per week. The non-expert group will be composed of subjects who must not have had any Pilates practice in the last three months" [4]. This level of specificity in participant characterization ensures that proper comparisons can be made between groups with defined experience levels.

Table 2: Data Collection Methods and Their Applications in Cognitive Research

| Method Category | Specific Techniques | Primary Applications in IQ Research | Reliability Considerations |
|---|---|---|---|
| Performance Tasks | Standardized cognitive tests, Computerized assessments | Direct measurement of cognitive abilities, Processing speed assessment | Test-retest reliability, Internal consistency [5] |
| Physiological Measures | EEG, fNIRS, Eye tracking, Shear wave elastography | Neurocognitive function, Attention monitoring, Muscle-brain connection | Equipment calibration, Signal quality indices [4] |
| Behavioral Observation | Structured interviews, Systematic coding, Video analysis | Executive function assessment, Behavioral manifestation of intelligence | Inter-rater reliability, Coding scheme validation [5] |
| Self-Report Measures | Questionnaires, Surveys, Rating scales | Metacognitive awareness, Learning strategies, Subjective cognitive complaints | Internal consistency, Response bias monitoring [5] |
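Internal consistency, listed in Table 2, is commonly quantified with Cronbach's alpha. The following minimal sketch computes it from a participant-by-item score matrix; the example scores are invented, and the source does not say which reliability coefficient any cited study used.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for rows of item scores (one row per participant).

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total score))
    """
    if len(scores) < 2 or len(scores[0]) < 2:
        raise ValueError("need at least two participants and two items")
    k = len(scores[0])
    item_variances = [variance(column) for column in zip(*scores)]
    total_variance = variance([sum(row) for row in scores])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Invented questionnaire data: 5 participants x 4 items on a 1-5 scale.
responses = [
    [4, 5, 4, 4],
    [2, 2, 3, 2],
    [5, 4, 5, 5],
    [3, 3, 3, 4],
    [1, 2, 1, 2],
]
alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.2f}")
```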

Standardized Administration Procedures

Standardization of assessment procedures is critical for ensuring the reliability and validity of intelligence measurements. Experimental psychology research demonstrates that "assessing individual differences necessitates the use of validated tasks or protocols that are delivered in a standardized manner" [5]. The development of robust assessment tasks requires significant investment, with estimates suggesting that proper task validation "can easily take more than a year" due to the need for multiple iterations and rigorous evaluation.

Standardized administration includes:

- Consistent testing environments with controlled distractions

- Uniform instruction protocols across all participants

- Calibrated equipment with regular maintenance checks

- Trained administrators who demonstrate competence in protocol implementation

The importance of standardization is particularly evident in cognitive tasks where subtle variations in administration can significantly impact performance outcomes. For example, in a study investigating neuromuscular responses, researchers maintained strict standardization by ensuring that "all instructors involved in these facilities will have specific training to be able to teach and supervise these two new skills (four hours)" [4]. This commitment to standardized training ensures that participant exposure to experimental conditions remains consistent across the study cohort.

Diagram 1: Participant Flow in Experimental Research Protocol. This diagram illustrates the sequential flow of participants through a standardized research design, from recruitment through data analysis, ensuring methodological rigor. Adapted from semi-randomized controlled trial methodology [4].

Quality Assurance & Validation Protocols

Data Validation and Quality Checks

Implementing systematic validation procedures is essential for maintaining data integrity throughout the collection process. These procedures include both real-time validation at the point of data entry and post-collection verification to identify inconsistencies or anomalies. As noted in best practices for data collection, "collecting data is only half the battle; ensuring its accuracy, completeness, and consistency is what creates real value" [6].

Effective validation protocols include:

- Field-level validation that enforces data format requirements at entry

- Range checks that identify values outside plausible parameters

- Consistency verification that ensures related data elements align logically

- Automated quality assurance mechanisms that clean and standardize data post-collection
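The first three checks in the list above can be sketched as a single validation function applied to each record at entry. The field names, the `P0000` identifier format, and the 100-5000 ms plausibility window are hypothetical choices made for illustration, not requirements from the cited sources.

```python
import re

# Hypothetical participant ID format: the letter P followed by four digits.
ID_PATTERN = re.compile(r"^P\d{4}$")


def validate_record(record):
    """Return a list of validation errors (an empty list means valid)."""
    errors = []

    # Field-level validation: enforce the ID format at entry.
    if not ID_PATTERN.match(str(record.get("participant_id", ""))):
        errors.append("participant_id: expected format P0000")

    # Range check: flag reaction times outside a plausible window.
    rt = record.get("reaction_time_ms")
    if not isinstance(rt, (int, float)) or not 100 <= rt <= 5000:
        errors.append("reaction_time_ms: outside plausible range 100-5000")

    # Consistency check: correct responses cannot exceed trials given.
    correct, total = record.get("n_correct"), record.get("n_trials")
    if not (isinstance(correct, int) and isinstance(total, int)
            and 0 <= correct <= total):
        errors.append("n_correct: must be an integer between 0 and n_trials")

    return errors


good = {"participant_id": "P0042", "reaction_time_ms": 612.5,
        "n_correct": 18, "n_trials": 20}
bad = {"participant_id": "X1", "reaction_time_ms": 20,
       "n_correct": 12, "n_trials": 10}
print(validate_record(good))
print(validate_record(bad))
```

Returning every error at once, rather than stopping at the first, lets the data-entry interface show the administrator all problems with a record in a single pass.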

In intelligence research, these validation procedures are particularly important for cognitive test data, where measurement errors can significantly impact outcome interpretations. Research indicates that "because the research design is a correlational design, it is important that the test scores be stable, a requirement called reliability" [5]. Without demonstrating adequate reliability through validation procedures, correlations between cognitive measures may be underestimated or misinterpreted.
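The underestimation mentioned above can be quantified with Spearman's classical correction for attenuation, which divides the observed correlation by the square root of the product of the two measures' reliabilities. The numbers below are illustrative and are not taken from [5].

```python
from math import sqrt


def disattenuated_r(r_observed, reliability_x, reliability_y):
    """Estimate the correlation between true scores.

    r_true = r_observed / sqrt(reliability_x * reliability_y)
    """
    for rel in (reliability_x, reliability_y):
        if not 0 < rel <= 1:
            raise ValueError("reliabilities must be in (0, 1]")
    return r_observed / sqrt(reliability_x * reliability_y)


# Illustrative values: two tasks, each with reliability 0.80.
r_true = disattenuated_r(r_observed=0.40, reliability_x=0.80,
                         reliability_y=0.80)
print(f"{r_true:.2f}")
```

With these values an observed correlation of 0.40 corresponds to an estimated true-score correlation of about 0.50, showing how modest unreliability in both measures shrinks the observed association.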

Privacy and Ethical Compliance

Ethical data handling represents a fundamental requirement in intelligence research, particularly when collecting potentially sensitive cognitive performance information. Current best practices emphasize that "beyond simply collecting data, organizations have an ethical and legal responsibility to protect it" through obtaining informed consent and adhering to privacy regulations such as GDPR and CCPA [6]. The SPIRIT 2025 guidelines further reinforce these requirements, highlighting the need for clear documentation of data sharing policies and conflict of interest declarations [1].

Key ethical protocols include:

- Informed consent procedures that clearly explain data collection and usage

- Secure data storage with appropriate access controls

- Data anonymization techniques that protect participant identity

- Transparent documentation of data handling procedures
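One way to approach the identity-protection item above is keyed pseudonymization: replacing each participant identifier with an HMAC-SHA256 digest so records can still be linked across sessions without storing the original ID. This is a sketch under stated assumptions: the key and ID format are placeholders, and pseudonymized data generally still counts as personal data under regulations such as GDPR, so this does not amount to full anonymization.

```python
import hashlib
import hmac


def pseudonymize(participant_id, secret_key):
    """Return a stable 16-hex-character pseudonym for a participant ID.

    secret_key must be bytes and should be stored separately from the
    dataset; without it the mapping cannot be recomputed.
    """
    digest = hmac.new(secret_key, participant_id.encode("utf-8"),
                      hashlib.sha256)
    return digest.hexdigest()[:16]


# Placeholder key for illustration only; never hard-code a real key.
KEY = b"replace-with-a-randomly-generated-secret"

code_a = pseudonymize("P0042", KEY)
code_b = pseudonymize("P0043", KEY)
print(code_a, code_b)
```

A keyed hash is used instead of a plain hash because short, structured IDs are easy to guess; without the key, an attacker cannot simply hash every candidate ID and compare.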

The integration of these ethical considerations extends beyond regulatory compliance to fundamentally strengthen research quality. When "attendees understand what data you are collecting and why, they are more likely to provide it willingly and accurately, leading to higher-quality insights" [6]. This principle applies equally to intelligence research, where participant engagement and honest effort directly impact data quality.

Bias Mitigation and Representative Sampling

Minimizing systematic bias is particularly crucial in intelligence research, where historical controversies have highlighted the potential impacts of sampling limitations on findings and interpretations. Contemporary methodologies emphasize that "collecting data is not enough; the data must accurately reflect your target audience" through representative sampling techniques that reduce systematic errors [6]. This requires careful attention to participant recruitment strategies that avoid over-reliance on convenient but non-representative samples.

Effective bias mitigation strategies include:

- Stratified sampling approaches that ensure demographic representation

- Statistical controls for confounding variables

- Blinded assessment procedures to reduce experimenter bias

- Multiple measurement methods to reduce method-specific variance
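The stratified sampling item above can be sketched as proportional allocation: group the recruitment pool by stratum and draw the same fraction from each group. The pool, the stratum labels, and the 20% fraction are invented for illustration.

```python
import random
from collections import defaultdict


def stratified_sample(participants, stratum_of, fraction, seed=0):
    """Draw the same fraction from every stratum (proportional allocation).

    participants: iterable of records.
    stratum_of:   function mapping a record to its stratum label.
    fraction:     share of each stratum to sample, between 0 and 1.
    seed:         fixed seed so the draw is reproducible.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for person in participants:
        strata[stratum_of(person)].append(person)

    sample = []
    for label in sorted(strata):
        members = strata[label]
        k = max(1, round(fraction * len(members)))  # at least one each
        sample.extend(rng.sample(members, k))
    return sample


# Invented pool: 100 people in stratum "A" and 50 in stratum "B".
pool = [("A", i) for i in range(100)] + [("B", i) for i in range(50)]
chosen = stratified_sample(pool, stratum_of=lambda p: p[0], fraction=0.2)
counts = {label: sum(1 for p in chosen if p[0] == label) for label in "AB"}
print(counts)
```

Fixing the seed and iterating strata in sorted order keeps the draw reproducible, which helps when the sampling procedure must be documented in the protocol.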

The importance of these procedures is underscored by research showing that "failing to address bias can lead to flawed strategies built on misleading information" [6]. In intelligence research, where findings often have significant social and educational implications, rigorous attention to bias mitigation represents both a methodological and ethical imperative.

Diagram 2: Data Validation and Quality Assurance Workflow. This diagram illustrates the sequential process of data validation, from initial entry through final quality certification, ensuring data integrity throughout the research lifecycle. Based on established data quality frameworks [3] [6].

Implementation Guidelines

Equipment and Technical Specifications

Precision measurement instruments form the foundation of valid intelligence assessment, requiring careful selection, calibration, and maintenance. Contemporary cognitive research utilizes increasingly sophisticated technologies, including neuroimaging equipment, eye-tracking systems, computerized testing platforms, and physiological monitoring devices. Each category of equipment requires specific technical specifications to ensure measurement validity and reliability across assessment sessions.

Implementation guidelines for equipment include:

- Regular calibration schedules with documented procedures

- Standardized operating protocols across all research sites

- Backup systems for critical data collection equipment

- Environmental controls to maintain optimal operating conditions

A study on neuromuscular learning provides an exemplary model of comprehensive equipment specification, documenting the use of "abdominal wall muscle ultrasound (AWMUS), shear wave elastography (SWE), gaze behavior (GA) assessment, electroencephalography (EEG), and video motion" [4]. This multi-method approach demonstrates how complementary technologies can provide a more comprehensive assessment of cognitive and physiological processes than single-method designs.

Personnel Training and Certification

Standardized administrator training is critical for ensuring consistent implementation of intelligence assessment protocols across research sites and throughout extended study timelines. Research demonstrates that task administration effects can significantly impact cognitive performance measures, particularly on tasks requiring precise timing, standardized instruction, and specific feedback protocols. Training programs should include both theoretical foundations and practical administration experience with competency assessments.

Key training components include:

- Protocol-specific instruction on standardized administration procedures

- Observed practice sessions with corrective feedback

- Competency certification based on predetermined criteria

- Ongoing quality assurance through periodic review

The importance of comprehensive training is highlighted in research protocols that specify "all instructors will have POLESTAR Pilates training completed between 2011 and 2015" [4]. This level of specificity in credential requirements ensures that all research personnel possess the necessary foundational knowledge to implement protocols consistently and accurately.

Troubleshooting and Protocol Adaptations

Anticipating implementation challenges represents a critical component of comprehensive research protocols, particularly in complex intelligence assessment studies involving multiple sessions, specialized equipment, or diverse participant populations. Effective troubleshooting guidelines identify common problems, provide structured solutions, and establish decision rules for protocol adaptations when necessary. The SPIRIT 2025 guidelines emphasize the importance of documenting potential protocol modifications and the circumstances under which they would be implemented [1].

Common troubleshooting categories include:

- Equipment failure contingency plans with alternative assessment options

- Participant comprehension verification and clarification protocols

- Data quality issues with real-time identification and resolution procedures

- Protocol deviation documentation and response guidelines

These troubleshooting protocols balance the need for methodological consistency with the practical reality that perfect implementation is not always achievable. By establishing predetermined adaptation criteria, researchers maintain methodological rigor while acknowledging real-world implementation challenges that might otherwise compromise data quality or participant safety.

Conclusion

The IQ-3 data collection framework presented in these application notes provides a comprehensive methodology for conducting rigorous intelligence assessment in research settings. By integrating standardized protocols, robust validation procedures, and systematic quality assurance measures, researchers can significantly enhance the reliability and validity of cognitive assessment outcomes. The structured approach emphasizing both primary data collection and secondary data integration offers a flexible yet standardized foundation adaptable to diverse research contexts and populations.

Implementation of these protocols requires meticulous attention to methodological detail, from participant recruitment through data analysis. However, the investment in comprehensive protocol development yields significant returns through enhanced data quality, improved research efficiency, and more definitive findings. As intelligence research continues to evolve, these foundational principles will support the development of increasingly sophisticated assessment methodologies while maintaining the methodological rigor necessary for meaningful scientific advancement.

References

- 1. SPIRIT 2025 statement: Updated guideline for protocols of ... [pmc.ncbi.nlm.nih.gov]

- 2. Data Collection Methods That Works [sopact.com]

- 3. AIMQ: a methodology for information quality assessment [sciencedirect.com]

- 4. Methodology and Experimental Protocol for Studying ... [mdpi.com]

- 5. Designing and evaluating tasks to measure individual ... [cognitiveresearchjournal.springeropen.com]

- 6. 10 Best Practices for Data Collection in 2025 [add-to-calendar-pro.com]

An Overview of Advanced Survey Tools and Principles

The term "IQ-3" appears in the context of two distinct software platforms: Sphinx iQ and SMART iQ. The information for both is several years old, but they highlight functionalities relevant to researchers and scientists [1] [2].

The table below summarizes the core features of Sphinx iQ as an example of an advanced survey platform:

| Feature Category | Key Capabilities |

|---|---|

| Survey Programming | AI-suggested questions, multi-channel design (web, mobile, paper), advanced display logic, automatic translation into 44+ languages [1]. |

| Data Analysis | Descriptive statistics, thematic and sentiment analysis via AI, multivariate analyses (regression, clustering), satisfaction KPIs (NPS, CSAT) [1]. |

| Data Visualization & Reporting | Customizable reports and dashboards, real-time data updates, interactive filters, profile-based data access [1]. |
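
As an illustration of the satisfaction KPIs listed in the table, the sketch below computes a Net Promoter Score from raw 0-10 ratings. It assumes the conventional NPS definition (promoters score 9-10, detractors 0-6); the function name `net_promoter_score` is hypothetical and is not part of Sphinx iQ.

```python
def net_promoter_score(ratings):
    """Return NPS in percentage points for 0-10 likelihood-to-recommend ratings."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    promoters = sum(1 for r in ratings if r >= 9)   # scores of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)  # scores of 0 through 6
    return 100.0 * (promoters - detractors) / len(ratings)

# 4 promoters, 2 detractors, 8 responses in total
print(net_promoter_score([10, 9, 8, 7, 6, 3, 10, 9]))  # 25.0
```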

Furthermore, contemporary research emphasizes the importance of standardization and reproducibility in survey-based data collection, particularly in biomedical and clinical sciences. Frameworks like ReproSchema are designed to address inconsistencies by using a schema-centric approach, ensuring that surveys are structured, version-controlled, and interoperable across different platforms and longitudinal studies [3]. This principle is critical for drug development professionals who require high data integrity.

Proposed Protocol for Building a Standardized Survey Workflow

Here is a detailed methodology for building a reproducible survey system, integrating concepts from the search results and your requirement for a structured, visual workflow.

1. Protocol Design & Authoring

- Objective Definition: Clearly define the research hypotheses and the key constructs to be measured.

- Assessment Selection: Choose standardized, validated questionnaires from existing libraries (e.g., a clinical depression scale) to ensure data comparability. The use of a shared library is a core feature of frameworks like ReproSchema [3].

- Schema-Centric Programming: Instead of building a survey in a simple GUI, define the survey structure using a structured schema (e.g., JSON-LD). This practice forces explicit declaration of each data element, its response type, metadata, and branch logic, enhancing reproducibility [3].

- Logic and Dynamic Behavior: Implement advanced display logic. For instance, a "pass/fail" question can dynamically show or hide follow-up questions and use conditional formatting (e.g., changing the question's background color based on the response), a technique demonstrated in platforms like Survey123 [4].
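
The schema-centric idea and the pass/fail branch logic described above can be sketched in a few lines. This is a simplified illustration using a plain Python dictionary, not actual ReproSchema JSON-LD or Survey123 syntax; the item ids and the `visible_if` key are hypothetical names chosen for the example.

```python
# Hypothetical survey schema: every item declares its id, response type,
# and (optionally) the condition under which it is displayed.
SURVEY_SCHEMA = {
    "id": "device_inspection",
    "version": "1.0.0",
    "items": [
        {"id": "inspection_result", "type": "choice", "options": ["pass", "fail"]},
        {"id": "failure_reason", "type": "text",
         "visible_if": {"item": "inspection_result", "equals": "fail"}},
        {"id": "inspector_notes", "type": "text"},
    ],
}

def visible_items(schema, answers):
    """Return the ids of items that should be shown given the answers so far."""
    shown = []
    for item in schema["items"]:
        rule = item.get("visible_if")
        # Items without a rule are always shown; others need the rule to match.
        if rule is None or answers.get(rule["item"]) == rule["equals"]:
            shown.append(item["id"])
    return shown

print(visible_items(SURVEY_SCHEMA, {"inspection_result": "pass"}))
print(visible_items(SURVEY_SCHEMA, {"inspection_result": "fail"}))
```

Because the display logic lives in the schema rather than in GUI settings, the whole survey definition can be placed under version control and diffed between study waves.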

2. Deployment & Data Collection

- Multichannel Distribution: Deploy the survey across appropriate channels (web links, email campaigns, integrated into mobile apps) while ensuring the user experience is consistent and functional on all devices [1].

- Quota and Anonymity Management: Actively manage response quotas to ensure a representative sample. For sensitive research, implement anonymity thresholds to suppress data from small sample sizes, a feature supported in tools like Qualtrics Stats iQ to protect participant confidentiality [5].
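
A minimal sketch of an anonymity threshold follows. It does not reproduce how Qualtrics implements the feature; the function name `suppress_small_groups`, the `site` field, and the default threshold of 5 are assumptions made for illustration.

```python
from collections import Counter

def suppress_small_groups(responses, group_key, threshold=5):
    """Count responses per group and mask any group below the threshold."""
    counts = Counter(r[group_key] for r in responses)
    # None marks a suppressed cell: too few respondents to report safely.
    return {group: (n if n >= threshold else None) for group, n in counts.items()}

responses = [{"site": "A"}] * 6 + [{"site": "B"}] * 2
print(suppress_small_groups(responses, "site"))  # {'A': 6, 'B': None}
```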

3. Analysis & Reporting

- Exploratory Data Analysis: Begin by using "Describe" functions to understand data distributions, identify outliers, and check for data quality issues [5].

- Statistical Relating and Modeling: Use "Relate" functions to explore bi-variate relationships. For deeper analysis, employ multivariate methods like regression or cluster analysis to identify key drivers and segment respondents [1] [5].

- AI-Powered Qualitative Analysis: For open-text responses, use text analysis tools to automatically code comments into themes and perform sentiment analysis (positive/neutral/negative) to quickly gauge respondent attitudes [1].

- Dashboard Creation: Build interactive dashboards with key performance indicators (KPIs). Ensure these dashboards update in real time and can be filtered by key demographic or response variables [1].
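
The exploratory step can be approximated outside any specific platform. The sketch below is not the Stats iQ "Describe" function; it is a generic summary that assumes the common 1.5 × IQR rule for flagging outliers, and the function name `describe` is hypothetical.

```python
import statistics

def describe(values):
    """Summarize a numeric variable and flag outliers with the 1.5 * IQR rule."""
    q1, _, q3 = statistics.quantiles(values, n=4)  # quartile cut points
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return {
        "n": len(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "q1": q1,
        "q3": q3,
        "outliers": [v for v in values if v < low or v > high],
    }

summary = describe([10, 12, 11, 13, 12, 11, 95])
print(summary["median"], summary["outliers"])  # 12 [95]
```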

Visual Workflow: From Protocol to Analysis

The following diagram, created with Graphviz per your specifications, illustrates the logical flow of the protocol described above.

Diagram Title: Standardized Survey Research Workflow

Recommendations for Finding Detailed Protocols

To obtain the specific application notes and technical protocols you need, I suggest the following actions:

- Consult Official Software Documentation: Directly visit the websites for platforms like Sphinx iQ, Qualtrics (Stats iQ), REDCap, and ReproSchema. Their support sites and developer portals are the most likely places to host detailed technical guides, API documentation, and white papers.

- Investigate Scientific Literature: Conduct a targeted search on academic databases like PubMed or Google Scholar using keywords such as "ReproSchema protocol," "standardized survey data collection," "REDCap implementation clinical trial," or "electronic data capture (EDC) best practices." The paper on ReproSchema is a strong indicator that such detailed resources exist in the scientific record [3].

- Explore Specialized Forums and Communities: User communities and forums for specific survey platforms (e.g., the REDCap Consortium) are invaluable resources where researchers and IT professionals share advanced techniques, custom solutions, and practical troubleshooting advice.

References

Statistical Analysis Techniques in Drug Development: Application Notes and Protocols

Introduction to Statistical Analysis in Pharmaceutical Research

The importance of statistical rigor in drug development continues to increase as methodologies advance and regulatory expectations evolve. Hypothesis testing provides a framework for efficacy determination in clinical trials, regression analysis identifies and quantifies relationships between variables, and Monte Carlo simulations model uncertainty in complex biological systems. This document provides detailed application notes and standardized protocols for these three fundamental statistical techniques, with specific emphasis on implementation in pharmaceutical research settings. These protocols are designed to meet the needs of researchers, scientists, and drug development professionals requiring both theoretical understanding and practical implementation guidance [2].

Hypothesis Testing in Clinical Trials

Application Notes

Hypothesis testing serves as a cornerstone methodology for determining treatment efficacy in clinical trials. This statistical approach provides a structured framework for evaluating whether observed differences in outcomes between treatment groups represent genuine effects or random variation. In pharmaceutical development, hypothesis testing formally compares two competing statements: the null hypothesis (H₀), which typically states no difference exists between treatments, and the alternative hypothesis (H₁), which asserts that a difference does exist [1]. For example, in a phase III clinical trial comparing a new therapeutic agent to standard care, the null hypothesis might state that the new drug shows no difference in efficacy compared to the standard treatment, while the alternative would claim superior efficacy.

The interpretation of hypothesis tests relies on p-values and significance levels, with the p-value representing the probability of observing results at least as extreme as those obtained if the null hypothesis were true. The conventional significance threshold (α) of 0.05 establishes a 5% risk of Type I error (falsely rejecting a true null hypothesis). Clinical trials also must consider statistical power, which represents the test's ability to correctly detect a true effect (typically targeted at 80-90%). Pharmaceutical applications extend beyond simple efficacy testing to include superiority, non-inferiority, and equivalence trials, each with specific hypothesis formulations and interpretation frameworks. Proper implementation requires careful attention to assumptions, sampling methods, and multiple testing corrections to maintain validity across complex trial designs [2].
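
The relationship between α, power, and trial size can be made concrete with a small calculation. The sketch below assumes a two-sided, two-sample comparison of means with equal group sizes and a known common standard deviation, using the normal-approximation formula n = 2(z₁₋α/₂ + z₁₋β)²σ²/δ². The function name and the example effect size are illustrative assumptions, not values from the cited sources.

```python
import math
from statistics import NormalDist

def sample_size_per_group(delta, sigma, alpha=0.05, power=0.80):
    """Per-group sample size for a two-sided, two-sample comparison of means."""
    z = NormalDist()                      # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)    # critical value for two-sided alpha
    z_beta = z.inv_cdf(power)             # quantile matching the target power
    n = 2 * ((z_alpha + z_beta) * sigma / delta) ** 2
    return math.ceil(n)

# Detect a difference of half a standard deviation
print(sample_size_per_group(delta=0.5, sigma=1.0))              # 63
print(sample_size_per_group(delta=0.5, sigma=1.0, power=0.90))  # 85
```

Exact calculations based on the t distribution give slightly larger numbers, so this approximation is best treated as a planning starting point.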

Experimental Protocol

Table 1: Key Components of Hypothesis Testing in Clinical Trials

| Component | Description | Example in Clinical Context |

|---|---|---|

| Null Hypothesis (H₀) | Statement of no effect or no difference | New drug shows no difference in response rate compared to placebo |

| Alternative Hypothesis (H₁) | Statement contradicting H₀ | New drug shows different response rate vs. placebo |

| Significance Level (α) | Probability of Type I error (false positive) | Typically set at 0.05 (5% risk) |

| Test Statistic | Calculated value from sample data | t-statistic, z-score, or chi-square value |

| P-value | Probability of results at least as extreme as those observed, if H₀ is true | p < 0.05 indicates statistical significance |

| Power (1-β) | Probability of correctly rejecting H₀ | Typically targeted at 80% or 90% |

Step-by-Step Implementation Protocol:

Formulate Hypotheses: Precisely define null and alternative hypotheses based on primary endpoint. For a superiority trial: H₀: μ₁ = μ₂ (no difference in means); H₁: μ₁ ≠ μ₂ (difference exists) [2] [1].

Select Significance Level: Establish α level before trial initiation, typically 0.05 for two-sided or 0.025 for one-sided tests, to control Type I error rate.

Choose Appropriate Test: Select statistical test based on data type and distribution:

- Continuous data: t-test (2 groups) or ANOVA (>2 groups)

- Categorical data: Chi-square test or Fisher's exact test

- Time-to-event data: Log-rank test

Calculate Test Statistic: Compute appropriate test statistic using sample data according to standard formulas.

Determine P-value: Evaluate the test statistic against its reference distribution (t, F, chi-square) to obtain the p-value.

Make Decision: Reject H₀ if p-value ≤ α; otherwise, fail to reject H₀.
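
The steps above can be sketched for the response-rate example in Table 1. This is a minimal illustration assuming a two-sided, pooled two-proportion z-test with large-sample normal approximation; the responder counts are invented for the example, and the function name is hypothetical.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(x1, n1, x2, n2, alpha=0.05):
    """Two-sided z-test for a difference in response rates between two arms."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)                       # rate under H0
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se                                   # test statistic
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))         # two-sided p-value
    return z, p_value, p_value <= alpha                  # decision: reject H0?

# Hypothetical data: 60/100 responders on drug vs. 45/100 on placebo
z, p_value, reject = two_proportion_z_test(60, 100, 45, 100)
print(f"z = {z:.3f}, p = {p_value:.4f}, reject H0: {reject}")
```

For small samples or sparse cells, Fisher's exact test (listed above) is the more appropriate choice.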

Regression Analysis for Drug Response Modeling

Application Notes

Regression analysis provides powerful modeling capabilities for understanding and quantifying relationships between variables in pharmaceutical research. This family of techniques examines how dependent variables (outcomes) change as independent variables (predictors) vary, allowing researchers to build predictive models of drug response, identify influential factors in treatment outcomes, and optimize formulation parameters. The core concept involves fitting a line of best fit (regression line) through observed data points to characterize relationships between variables [3] [4]. In drug development, regression might model how dosage levels (independent variable) affect therapeutic response (dependent variable), or how patient characteristics influence adverse event risk.

Different types of regression address various data structures and research questions in pharmaceutical applications. Linear regression models continuous outcomes, while logistic regression predicts categorical outcomes such as treatment success or failure. Multiple regression incorporates several predictors simultaneously, enabling researchers to control for confounding variables when assessing treatment effects. Beyond prediction, regression analysis can provide insights into mechanism of action by revealing which patient factors or drug properties most strongly influence outcomes. However, proper application requires verification of key assumptions including linearity, independence of errors, homoscedasticity, and normality of residual distributions [1].

Experimental Protocol

Table 2: Comparison of Regression Types in Pharmaceutical Research

| Regression Type | Data Structure | Pharmaceutical Application Examples |

|---|---|---|

| Simple Linear | One continuous independent variable, one continuous dependent variable | Dose-response relationships, bioavailability vs. dosage form |

| Multiple Linear | Multiple independent variables, one continuous dependent variable | Predicting efficacy based on dosage, patient age, and genetic markers |

| Logistic | Categorical dependent variable (binary or ordinal) | Predicting treatment success/failure based on patient characteristics |

| Polynomial | Non-linear relationships between variables | Modeling complex dose-response curves with saturation effects |

| Cox Proportional Hazards | Time-to-event data | Survival analysis in oncology trials |

Step-by-Step Implementation Protocol:

Define Research Question and Variables: Identify dependent variable (outcome of interest) and independent variable(s) (predictors). Clearly specify the expected relationship based on biological plausibility [3] [4].

Select Appropriate Regression Model: Choose regression type based on nature of dependent variable:

- Continuous outcome: Linear regression

- Binary outcome: Logistic regression

- Time-to-event: Cox regression

- Count data: Poisson regression

Assess Model Assumptions: Verify key assumptions before interpretation:

- Linearity: Relationship between variables is linear (for linear regression)

- Independence: Observations are independent

- Homoscedasticity: Constant variance of errors

- Normality: Residuals normally distributed

- Multicollinearity: Predictors not highly correlated

Parameter Estimation: Calculate regression coefficients using ordinary least squares (linear regression) or maximum likelihood estimation (e.g., logistic and Poisson regression). The core equation for multiple linear regression is: Y = β₀ + β₁X₁ + β₂X₂ + ... + βₖXₖ + ε, where Y is the dependent variable, β₀ is the intercept, β₁ through βₖ are the coefficients for each independent variable, and ε is the error term [3].

Model Validation: Evaluate model fit using appropriate statistics:

- R²: Proportion of variance explained (linear regression)

- Hosmer-Lemeshow test: Goodness of fit (logistic regression)

- AIC/BIC: Model comparison with penalty for complexity

Results Interpretation: Interpret coefficients in context of research question, considering both statistical significance and clinical relevance. For linear regression, each coefficient represents the change in the dependent variable per unit change in its predictor, holding the other predictors constant.

Prediction and Application: Use validated model for prediction within range of observed data, with appropriate confidence intervals.
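
For the simplest case in Table 2 (one predictor, one continuous outcome), the estimation and validation steps can be sketched with closed-form ordinary least squares. The dose and response values below are invented for illustration, and the function name `fit_simple_ols` is hypothetical; a real analysis would also check the assumptions listed above and report confidence intervals.

```python
def fit_simple_ols(x, y):
    """Fit Y = b0 + b1*X by ordinary least squares; return (b0, b1, R^2)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # R^2: proportion of outcome variance explained by the fitted line
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return intercept, slope, 1 - ss_res / ss_tot

doses = [10, 20, 30, 40, 50]                  # hypothetical doses (mg)
responses = [12.1, 19.8, 31.2, 39.5, 50.9]    # hypothetical responses
b0, b1, r2 = fit_simple_ols(doses, responses)
print(f"response = {b0:.2f} + {b1:.3f} * dose, R^2 = {r2:.3f}")

# Predict only within the observed dose range (10-50 mg)
print(f"predicted response at 35 mg: {b0 + b1 * 35:.1f}")
```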

Monte Carlo Simulation for Risk Assessment

Application Notes

Monte Carlo simulation represents a computational algorithm that uses repeated random sampling to model phenomena with significant uncertainty, making it particularly valuable for risk assessment in drug development. This technique allows researchers to quantify uncertainty in complex systems by generating probability distributions for potential outcomes rather than single-point estimates. By running thousands or millions of simulated experiments, Monte Carlo methods provide a comprehensive view of possible scenarios and their associated probabilities, enabling more informed decision-making under uncertainty [3] [4]. In pharmaceutical contexts, this approach helps model the propagation of uncertainty through complex biological systems and development processes.

The applications of Monte Carlo simulation in drug development are diverse and impactful. Clinical trial planning uses these methods to model patient recruitment rates, dropout patterns, and potential treatment effect sizes. Pharmacokinetic/pharmacodynamic (PK/PD) modeling applies Monte Carlo techniques to simulate drug concentration-time profiles and effect responses across virtual patient populations. Manufacturing quality risk assessment utilizes simulation to model the impact of process variability on critical quality attributes. The primary advantage lies in the ability to model complex, multi-factorial systems where analytical solutions are impossible or impractical, providing a comprehensive risk profile that supports robust decision-making [3].

Experimental Protocol

Table 3: Monte Carlo Simulation Applications in Drug Development

| Application Area | Input Uncertainties | Output Metrics |

|---|---|---|

| Clinical Trial Planning | Recruitment rate, dropout rate, treatment effect size | Probability of trial success, expected sample size, power estimation |

| PK/PD Modeling | Clearance, volume of distribution, receptor affinity | Probability of target attainment, expected efficacy, toxicity risk |

| Pharmacoeconomics | Drug efficacy, treatment duration, healthcare costs | Cost-effectiveness ratios, budget impact, value-based pricing |

| Manufacturing Quality | Process parameters, raw material attributes | Probability of meeting specifications, quality risk assessment |

| Portfolio Management | Technical success rates, development timelines, market size | Expected net present value, resource requirements, pipeline risk |

Step-by-Step Implementation Protocol:

Define Modeling Objectives: Clearly specify the output variables of interest and decision context. Determine what uncertainties need to be quantified and how results will inform decisions [3] [4].

Develop Mathematical Model: Create a computational model representing the system using relevant input-output relationships. This may involve:

- Pharmacokinetic models (e.g., compartmental models)

- Clinical outcome equations

- Cost-effectiveness frameworks

- Manufacturing process models

Characterize Input Uncertainties: Define probability distributions for all uncertain input parameters:

- Normal distribution: Symmetric uncertainty

- Lognormal distribution: Positive-skewed parameters

- Uniform distribution: Bounded uncertainty with equal probability

- Triangular distribution: Bounded uncertainty with central tendency

- Beta distribution: Probabilities and proportions

Generate Random Samples: Use random number generation to create input values from specified distributions. Sample size typically ranges from 10,000 to 100,000 iterations for stable results.

Run Model Iterations: Execute the mathematical model for each set of randomly sampled inputs, recording output values for each iteration.

Analyze Output Distribution: Aggregate results from all iterations to build probability distributions for output metrics:

- Calculate mean, median, and percentiles

- Determine probabilities of critical outcomes

- Identify key drivers of uncertainty through sensitivity analysis

Interpret and Apply Results: Translate simulation findings into risk assessments and development decisions. Communicate results using appropriate visualizations (histograms, cumulative distribution plots, tornado diagrams).
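
As a sketch of the protocol above, the following example estimates a probability of target attainment for the PK/PD row of Table 3. It assumes a one-compartment intravenous bolus model, C(t) = (Dose/V)·exp(-(CL/V)·t), with lognormally distributed clearance and volume; every parameter value (dose, medians, variability, target) is an illustrative assumption rather than data from the cited sources.

```python
import math
import random

def simulate_target_attainment(n_iter=10_000, dose_mg=500.0, t_hours=8.0,
                               target_mg_per_l=2.0, seed=42):
    """Monte Carlo estimate of P(concentration at t_hours >= target)."""
    rng = random.Random(seed)                 # seeded for reproducibility
    concentrations = []
    for _ in range(n_iter):
        # Input uncertainties: lognormal clearance (L/h) and volume (L)
        clearance = rng.lognormvariate(math.log(5.0), 0.3)
        volume = rng.lognormvariate(math.log(50.0), 0.2)
        # Model iteration: one-compartment IV bolus concentration at t_hours
        conc = (dose_mg / volume) * math.exp(-(clearance / volume) * t_hours)
        concentrations.append(conc)
    # Output analysis: attainment probability and selected percentiles
    concentrations.sort()
    prob = sum(c >= target_mg_per_l for c in concentrations) / n_iter
    percentiles = {p: concentrations[round(p / 100 * (n_iter - 1))]
                   for p in (5, 50, 95)}
    return prob, percentiles

prob, pct = simulate_target_attainment()
print(f"P(target attained) = {prob:.3f}")
print({p: round(c, 2) for p, c in pct.items()})
```

Re-running with different seeds or iteration counts is a quick way to check whether the chosen number of iterations gives stable estimates.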

Conclusion and Implementation Considerations

The three statistical techniques detailed in these application notes provide complementary capabilities for addressing different challenges in pharmaceutical research and development. Hypothesis testing offers a rigorous framework for efficacy determination in clinical trials, regression analysis enables relationship modeling and prediction across various development stages, and Monte Carlo simulation provides powerful uncertainty quantification for risk assessment and decision support. Mastery of these methods enhances the quality, efficiency, and regulatory acceptability of drug development programs.

Successful implementation requires integration of statistical thinking throughout the development lifecycle, from early discovery through post-marketing surveillance. Researchers should consider method selection criteria, assumption verification, and appropriate interpretation of results within both statistical and clinical contexts. Additionally, documentation practices must support regulatory submissions by clearly describing methodologies, justifications for approach selection, and comprehensive results reporting. As drug development continues to evolve with advances in personalized medicine and complex therapeutics, these foundational statistical methods will remain essential tools for transforming data into evidence-based development decisions [2] [1].

References

- 1. 3 Statistical Analysis Methods You Can Use to Make Business ... [online.hbs.edu]

- 2. 7 Statistical Analysis Methods Beginners Should Know [coursera.org]

- 3. Data Analysis Methods: 7 Essential Techniques for 2025 [atlan.com]

- 4. The 7 Most Useful Data Analysis Techniques [2025 Guide] [careerfoundry.com]

Understanding the Research Terminology

Your topic combines two distinct concepts. Here’s a breakdown to clarify:

- IQGAP3: This is a scaffold protein significantly overexpressed in various cancers. Research focuses on its role in promoting tumor growth, metastasis, and therapy resistance through specific signaling pathways, making it a potential therapeutic target [1] [2].

- Mixed-Methods Research: This is a methodology that intentionally integrates qualitative and quantitative approaches within a single study to provide a holistic understanding of a research question [3] [4]. It is commonly used in social, behavioral, and health sciences, including intervention studies, but is not a standard approach in fundamental molecular biology research that characterizes IQGAP3 function [5].

Since your request for "Application Notes and Protocols" is best suited to the IQGAP3 research, the following section details its role in cancer biology.

Application Notes: The Oncogenic Role of IQGAP3

IQGAP3 is an important scaffold protein that facilitates cancer progression by regulating key cellular signaling pathways. The table below summarizes its functions and mechanisms based on recent studies.

| Cancer Type | Primary Function of IQGAP3 | Key Signaling Pathways & Effectors | Cellular & Clinical Outcomes |

|---|---|---|---|

| Gastric Cancer | Serves as a hub for signal transduction, mediating crosstalk between cancer cells and the tumor microenvironment [1]. | KRAS, MEK/ERK, TGF-β/SMAD [1]. | Enhanced tumorigenesis, lung metastasis, and establishment of functional heterogeneity within the tumor [1]. |

| Lung Cancer | Promotes stemness, metastasis, and radiation resistance [2]. | Hedgehog signaling, GLI1 transcription factor [2]. | Increased migration, invasion, sphere-forming capability, and reduced patient survival [2]. |

| Head & Neck Cancer (Related protein IQGAP1) | Scaffolds the PI3K/AKT/mTOR signaling pathway [6]. | PI3K, AKT [6]. | Increased cell survival, proliferation, and carcinogenesis; high expression correlates with poor survival [6]. |

Experimental Protocols for IQGAP3 Research

Below are detailed methodologies for key experiments used to elucidate IQGAP3's function in the cited studies.

Gene Knockdown using siRNA

- Purpose: To investigate the functional consequences of reducing IQGAP3 expression in cancer cells.

- Procedure:

- Cell Culture: Maintain relevant cancer cell lines (e.g., A549, H1299 for lung cancer; NUGC3, AGS for gastric cancer) in appropriate media [1] [2].

- Transfection: Plate cells in multi-well plates and transfect with IQGAP3-specific small interfering RNA (siRNA) using a transfection reagent like Lipofectamine RNAiMAX [1] [2].

- Incubation: Replace the medium after 5 hours and incubate the cells for 24-72 hours before harvesting for further analysis [2].

- Analysis: Assess knockdown efficiency via Western Blotting or RT-qPCR and evaluate phenotypic changes in functional assays.
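
When knockdown efficiency is assessed by RT-qPCR, relative expression is commonly calculated with the 2^-ΔΔCt method. The cited studies do not state which quantification method they used, so the sketch below is an assumption; it also presumes roughly 100% amplification efficiency and a stable reference gene, and the Ct values are invented.

```python
def relative_expression(ct_target_kd, ct_ref_kd, ct_target_ctrl, ct_ref_ctrl):
    """Fold change of the target gene (knockdown vs. control) by 2^-ddCt."""
    delta_kd = ct_target_kd - ct_ref_kd          # dCt in siRNA-treated cells
    delta_ctrl = ct_target_ctrl - ct_ref_ctrl    # dCt in control cells
    return 2 ** -(delta_kd - delta_ctrl)         # 2^-ddCt

# Hypothetical Ct values: target gene vs. a reference (housekeeping) gene
fold = relative_expression(ct_target_kd=26.0, ct_ref_kd=18.0,
                           ct_target_ctrl=24.0, ct_ref_ctrl=18.0)
print(f"relative expression: {fold:.2f}")            # 0.25
print(f"knockdown efficiency: {(1 - fold) * 100:.0f}%")  # 75%
```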

Western Blotting

- Purpose: To detect and quantify protein expression levels (e.g., IQGAP3, GLI1, pathway phosphoproteins).

- Procedure:

- Protein Extraction: Lyse harvested cells or tissue samples in RIPA or other suitable lysis buffer.

- Electrophoresis: Load equal amounts of protein onto an SDS-PAGE gel to separate proteins by size.

- Transfer: Transfer proteins from the gel to a PVDF membrane.

- Blocking and Antibody Incubation: Block the membrane with non-fat milk, then incubate with a primary antibody (e.g., anti-IQGAP3, anti-GLI1) overnight at 4°C. The next day, incubate with an HRP-conjugated secondary antibody [2].

- Detection: Visualize protein bands using an ECL-plus kit and a chemiluminescence imaging system [2].

RNA Sequencing and Transcriptomic Analysis

- Purpose: To identify global changes in gene expression resulting from IQGAP3 knockdown.

- Procedure:

- RNA Extraction: Extract total RNA from control and IQGAP3-knockdown cells using a commercial kit [1].

- Library Preparation and Sequencing: Prepare sequencing libraries and perform RNA-sequencing on a platform like Illumina.

- Bioinformatic Analysis: Use Gene Set Enrichment Analysis (GSEA) to identify signaling pathways that are significantly altered upon IQGAP3 depletion (e.g., KRAS signaling, TGF-β signaling) [1].
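
To show what the enrichment step computes, the sketch below implements the weighted running-sum enrichment score that underlies GSEA. It is a teaching illustration only, not the GSEA software: it omits permutation-based significance and normalization, and the gene names and scores are placeholders.

```python
def enrichment_score(ranked_genes, scores, gene_set, weight=1.0):
    """Running-sum enrichment score for a list ranked from most up- to
    most down-regulated; returns the maximum deviation from zero."""
    hits = [g in gene_set for g in ranked_genes]
    n_miss = len(ranked_genes) - sum(hits)
    hit_norm = sum(abs(s) ** weight for s, h in zip(scores, hits) if h)
    if hit_norm == 0 or n_miss == 0:
        raise ValueError("gene set must overlap the list without covering it")
    running, best = 0.0, 0.0
    for s, h in zip(scores, hits):
        # Step up (weighted by the score) on a hit, step down on a miss
        running += abs(s) ** weight / hit_norm if h else -1.0 / n_miss
        if abs(running) > abs(best):
            best = running
    return best

genes = ["g1", "g2", "g3", "g4", "g5", "g6"]     # placeholder gene ids
scores = [3.0, 2.0, 1.0, -1.0, -2.0, -3.0]       # e.g., signed ranking metric
print(enrichment_score(genes, scores, {"g1", "g2"}))   # set at top: positive ES
print(enrichment_score(genes, scores, {"g5", "g6"}))   # set at bottom: negative ES
```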

Visualizing IQGAP3 Signaling Pathways

The following diagram illustrates the key signaling pathways mediated by IQGAP3 in gastric and lung cancer, as identified in the research.

This diagram highlights IQGAP3's role as a central regulator of oncogenic signaling. In gastric cancer, it activates the KRAS-MEK-ERK and TGF-β-SMAD axes to promote a permissive tumor microenvironment and functional heterogeneity [1]. In lung cancer, it acts upstream of the Hedgehog signaling pathway, leading to the stabilization of the GLI1 transcription factor, which drives stemness and metastasis [2].

A Note on Mixed-Methods Research Design

While mixed-methods research may not be directly applicable to the basic science of IQGAP3, it is a powerful framework in intervention and clinical research. If your work progresses to evaluating a therapeutic targeting IQGAP3 in a population, this approach would be valuable. The core designs are [3] [4]:

- Explanatory Sequential: Start with quantitative data (e.g., a clinical trial measuring tumor size), then use qualitative data (e.g., patient interviews) to explain the quantitative results.

- Exploratory Sequential: Begin with qualitative data (e.g., focus groups with clinicians) to explore a problem, and use the findings to develop a quantitative tool or intervention (e.g., a large-scale survey).

- Convergent Parallel: Collect quantitative and qualitative data simultaneously and merge the results to get a complete picture.

Key Research Gaps and Future Directions

- Therapeutic Development: The strong oncogenic role of IQGAP3 makes it a compelling target, but the development of specific small-molecule inhibitors or other therapeutic modalities is still an active area of research.

- Cross-Talk Mechanisms: Further investigation is needed to fully understand how IQGAP3-mediated pathways (like RAS-ERK and TGF-β) interact with other signaling networks in different cancer types.

- Translational Studies: Research is needed to bridge the gap between these molecular findings and clinical applications, where mixed-methods research could indeed play a role in understanding implementation barriers and patient experiences.

References

- 1. IQGAP3 signalling mediates intratumoral functional ... [pmc.ncbi.nlm.nih.gov]

- 2. IQGAP3 activates Hedgehog signaling to confer stemness ... [nature.com]

- 3. Mixed Methods Research: Combining Qualitative and Quantitative Data [nngroup.com]

- 4. Mixed Methods Research Guide With Examples [dovetail.com]

- 5. Use of mixed methods research in intervention studies to ... [pmc.ncbi.nlm.nih.gov]

- 6. A PI3K/AKT Scaffolding Protein, IQ motif-containing ... [pmc.ncbi.nlm.nih.gov]

A Framework for Application Notes & Protocols

For researchers and scientists, a well-structured document is crucial for reproducibility and clarity. Here is a suggested outline you can adapt once you have your specific data:

- 1. Title and Abstract: A concise summary of the application, key findings, and conclusions.

- 2. Introduction: Background on the scientific problem, the technology or method used (e.g., the assay, instrument, or software), and the objectives of the note.

- 3. Materials and Methods: A detailed description of the experimental protocol.

- 4. Results and Data Analysis: Presentation of the findings, including all figures, tables, and graphs.

- 5. Discussion: Interpretation of the results and their significance.

- 6. Conclusions and References.

Experimental Protocol: Detailed Methodology

The "Materials and Methods" section should be detailed enough for another professional to replicate the work. A generic template is provided below, which you should fill with your specific experimental details.

Table 1: Generic Experimental Protocol Template

| Step | Component | Specification / Description | Purpose / Rationale |

|---|---|---|---|

| 1 | Sample Preparation | (e.g., Cell line, concentration, treatment conditions) | To establish the baseline biological system for testing. |

| 2 | Assay Procedure | (e.g., Kit name, catalog number, incubation times) | To measure the specific target or activity of interest. |

| 3 | Data Acquisition | (e.g., Instrument name, settings, software version) | To generate raw quantitative data for analysis. |

| 4 | Data Analysis | (e.g., Statistical tests, software used, normalization method) | To interpret raw data and derive significant results. |

| 5 | Quality Control | (e.g., Controls used, acceptance criteria) | To ensure the validity and reliability of the experimental data. |

Data Presentation: Structured Tables for Quantitative Data

Presenting data in clearly structured tables allows for easy comparison. Below is a template for how you might structure your quantitative results.

Table 2: Template for Presenting Quantitative Experimental Results

| Experimental Group | Parameter A (Mean ± SD) | Parameter B (Mean ± SD) | p-value | Statistical Test |

|---|---|---|---|---|

| Control Group | (Value) | (Value) | -- | -- |

| Treatment Group 1 | (Value) | (Value) | (Value) | e.g., Student's t-test |

| Treatment Group 2 | (Value) | (Value) | (Value) | e.g., One-way ANOVA |
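
The "Mean ± SD" cells in the template can be generated consistently from raw replicates. A minimal sketch, assuming the sample standard deviation (n - 1 denominator) is the intended statistic; the function name `summarize` and the replicate values are hypothetical.

```python
import statistics

def summarize(values, decimals=2):
    """Format replicate measurements as 'mean ± SD' (sample SD)."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)        # requires at least two values
    return f"{mean:.{decimals}f} ± {sd:.{decimals}f}"

control = [1.0, 2.0, 3.0]                # hypothetical replicate values
print(f"| Control Group | {summarize(control)} |")   # | Control Group | 2.00 ± 1.00 |
```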

Graphviz Visualization: Protocols & Workflows

For creating diagrams of signaling pathways or experimental workflows, here are key Graphviz techniques based on your specifications.

Graphviz Configuration Guide

The following tips are compiled from Graphviz documentation and user forums [1] [2] [3]:

- HTML-like Labels: For advanced text formatting within a node (like multiple colors or fonts), use HTML-like labels with the corresponding formatting tags [3] [4].

- Label Placement: Use the labeldistance attribute to control the distance of an edge's head or tail label from its node. A value greater than 2.0 will create a more noticeable gap, improving readability [2] [5].

- Node and Text Contrast: To ensure high contrast, always explicitly set the fontcolor and fillcolor attributes for nodes. The style=filled attribute is also often necessary [4].

- Fixed Node Sizes: Using fixedsize=true and setting width and height can help create a more uniform and aligned graph layout [2] [4].
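
The attributes discussed above can be combined in a small generator that writes Graphviz DOT source as plain text. This is a sketch under stated assumptions: the step names are taken from the generic protocol template, the colors and sizes are arbitrary choices, and the helper `build_workflow_dot` is hypothetical (no Graphviz Python binding is used).

```python
def build_workflow_dot(steps):
    """Return DOT source for a left-to-right workflow of uniformly sized nodes."""
    lines = [
        "digraph workflow {",
        "  rankdir=LR;",
        # style=filled with explicit fillcolor/fontcolor keeps contrast high;
        # fixedsize with width/height gives every node the same dimensions.
        '  node [shape=box, style=filled, fillcolor="#1f4e79", fontcolor="white",',
        "        fixedsize=true, width=2.2, height=0.6];",
    ]
    for i, step in enumerate(steps):
        lines.append(f'  n{i} [label="{step}"];')
    for i in range(len(steps) - 1):
        # labeldistance scales how far the tail label sits from its node
        lines.append(f'  n{i} -> n{i + 1} [taillabel="{i + 1}", labeldistance=2.5];')
    lines.append("}")
    return "\n".join(lines)

dot_source = build_workflow_dot(
    ["Sample Preparation", "Assay Procedure", "Data Acquisition",
     "Data Analysis", "Quality Control"]
)
print(dot_source)
```

Saving the output to a file such as workflow.dot and running `dot -Tpng workflow.dot -o workflow.png` should render the diagram, provided Graphviz is installed.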

Diagram 1: Conceptual Experimental Workflow

This diagram outlines a generic high-level workflow for a research project.

Title: Generic Experimental Workflow and Data Analysis Pipeline

Diagram 2: Signaling Pathway Logic

This diagram illustrates a simplified and hypothetical signaling pathway based on a feedback mechanism.

Title: Simplified Signaling Pathway with Feedback Loop

How to Find Specific "IQ-3" Information

To locate the information you need, I suggest the following steps:

- Refine Your Search Terms: The term "IQ-3" is likely a product name or model number. Try searching for the full, precise name of the technology combined with terms like "technical datasheet," "application note," "user manual," or "protocol." Include the manufacturer's name if you know it.

- Consult Scientific Databases: Search specialized databases like PubMed, Google Scholar, or manufacturer websites for published papers or technical documentation that cite the specific "IQ-3" platform.

- Verify the Context: Ensure that the "IQ-3" you are researching is indeed related to data visualization or analysis in drug development, and not one of the unrelated products identified in the search.

References

An Overview of "iQ" Software for Research

The table below summarizes the three primary "iQ" software packages identified, helping you distinguish their core applications:

| Software Name | Primary Function | Key Application Areas |

|---|---|---|

| Qualtrics Text iQ [1] [2] | Advanced Text Analysis | Customer Experience (CX), Employee Experience (EX), Market Research |

| Sphinx iQ3 [3] | Comprehensive Survey Platform | Survey programming, data collection, statistical analysis, text analysis |

| Andor iQ3 [4] | Multi-Dimension Image Acquisition | Scientific imaging and spectroscopy, live cell biology |

For analyzing open-ended text responses from surveys or research data, Qualtrics Text iQ and the text analysis features within Sphinx iQ3 are the most directly relevant options [3] [1] [2].

Protocol for Text Analysis with Qualtrics Text iQ

For researchers using Qualtrics Text iQ, the process involves setup, topic modeling, and analysis.

Workflow Overview

The core workflow for a text analysis project has three phases: survey design and data collection, topic model development, and analysis, visualization, and insight generation.

Phase 1: Survey Design and Data Collection

Proper data collection is foundational. Adhering to best practices at this stage ensures higher quality data for analysis [1]:

- Question Design: Use the "essay" question variation for long responses and "single line" for short answers.

- Avoid Bias: Do not use "force response" on text entry questions, as this can lead to non-meaningful answers like "N/A".

- Question Focus: Ask only one question per text entry to avoid confusion and simplify analysis.

- Prevent Fatigue: Limit the number of text entry questions in a survey to reduce respondent dropout.

Phase 2: Developing a Topic Model

Creating a model to categorize comments into topics is an iterative process. The table below outlines three complementary approaches [1]:

| Approach | Description | When to Use |

|---|---|---|

| Top-Down | Create topics based on pre-existing hypotheses or industry-standard starter packs. | When you have clear expectations about the themes that will appear. |

| Bottom-Up | Read through a sample of responses first to identify emergent trends, then build topics to match. | When exploring new areas without strong prior assumptions. |

| Automatic | Use Qualtrics' AI to analyze responses and recommend topics and structures. | To speed up the initial model creation or to validate manually created topics. |

After creating initial topics, you can refine the model by creating new topics for untagged comments or adjusting queries to capture missed relevant comments [1].
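The refinement loop described above can be sketched in a few lines of Python. This is not the Qualtrics Text iQ API: the functions, the keyword-based "queries," and the sample comments are hypothetical, and substring matching is a deliberately naive stand-in for a real topic query.

```python
def tag_comments(comments, topic_queries):
    """Pair each comment with every topic whose keywords it mentions."""
    tagged = []
    for comment in comments:
        text = comment.lower()
        topics = [
            topic
            for topic, keywords in topic_queries.items()
            if any(keyword in text for keyword in keywords)
        ]
        tagged.append((comment, topics))
    return tagged


def untagged(tagged):
    """Return comments that matched no topic: candidates for new topics."""
    return [comment for comment, topics in tagged if not topics]


# Hypothetical responses and a small top-down starter model.
comments = [
    "The wait time at the clinic was too long",
    "Staff were friendly and helpful",
    "Parking was hard to find",
]
topic_queries = {
    "Wait Time": ["wait", "queue"],
    "Staff": ["staff", "nurse"],
}

first_pass = tag_comments(comments, topic_queries)
print(untagged(first_pass))  # comments the model does not cover yet

# Refinement: add a topic for the uncovered comment, then re-run the tagging.
topic_queries["Facilities"] = ["parking", "building"]
second_pass = tag_comments(comments, topic_queries)
print(untagged(second_pass))
```

Repeating the tag, inspect, and adjust cycle until few comments remain untagged mirrors the iterative process, whichever of the three approaches produced the starting topics.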

Phase 3: Analysis, Visualization, and Insight Generation

Once your topic model is stable, you can use various widgets and filters to gain insights [1]:

- Bubble Chart Widget: Visualize all topics, where bubble size indicates the volume of comments and color indicates average sentiment.

- Filtering: Click on a topic bubble to filter the entire dashboard, allowing you to see metrics like NPS for a specific issue.

- Trend Analysis: Use line charts to track how the volume or sentiment of a topic changes over time.

- Drill-Down: Read individual comments within a filtered topic to understand the context behind the quantitative data.
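To make the quantities behind these widgets concrete, the sketch below computes what a bubble chart would encode (comment volume and average sentiment per topic) and then filters to one topic to compute its NPS. The records, field names, and sentiment scale (-1 to 1) are assumptions for illustration, not a Qualtrics export format. NPS uses the standard definition: percentage of promoters (scores 9 to 10) minus percentage of detractors (scores 0 to 6).

```python
from collections import defaultdict
from statistics import mean


def summarize_topics(records):
    """Compute comment volume and mean sentiment for each topic."""
    scores_by_topic = defaultdict(list)
    for record in records:
        scores_by_topic[record["topic"]].append(record["sentiment"])
    return {
        topic: {"volume": len(scores), "avg_sentiment": mean(scores)}
        for topic, scores in scores_by_topic.items()
    }


def net_promoter_score(scores):
    """Percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    promoters = sum(1 for score in scores if score >= 9)
    detractors = sum(1 for score in scores if score <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical tagged responses: one topic, a sentiment score, an NPS rating.
records = [
    {"topic": "Wait Time", "sentiment": -0.5, "nps": 3},
    {"topic": "Wait Time", "sentiment": -1.0, "nps": 6},
    {"topic": "Staff", "sentiment": 1.0, "nps": 10},
    {"topic": "Staff", "sentiment": 0.5, "nps": 8},
]

summary = summarize_topics(records)
print(summary)

# Equivalent of clicking a bubble: restrict the metric to a single topic.
wait_time_scores = [r["nps"] for r in records if r["topic"] == "Wait Time"]
print(net_promoter_score(wait_time_scores))
```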

Best Practices for Text Analysis Projects

Beyond specific software, these general practices can improve any text analysis project [5]:

- Define Goals First: Clearly identify the goals of your analysis before collecting data. This determines the method, the amount of data needed, and the sampling plan.

- Plan Your Data Sampling: It is often more effective to use a representative sample of your data than to attempt analyzing an entire massive dataset. Consider:

  - Random Sampling: Randomly selecting a subset of documents from the entire dataset.

  - Stratified Sampling: Dividing the dataset into distinct groups (e.g., by customer type or region) and then randomly sampling from each group.

- Understand Saturation: In text analysis, adding more data eventually stops providing new insights. Start with a mid-sized dataset and expand only if necessary.
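The difference between the two sampling strategies can be illustrated with Python's standard random module. The dataset, the region field, and the 10% sampling fraction below are hypothetical; the sketch draws the same fraction from every group, which is one common way to stratify.

```python
import random
from collections import defaultdict


def random_sample(documents, k, seed=0):
    """Draw k documents uniformly at random from the whole dataset."""
    rng = random.Random(seed)
    return rng.sample(documents, k)


def stratified_sample(documents, key, fraction, seed=0):
    """Draw the same fraction of documents from each group defined by key."""
    rng = random.Random(seed)
    groups = defaultdict(list)
    for doc in documents:
        groups[key(doc)].append(doc)
    sample = []
    for members in groups.values():
        # Take at least one document so that small groups stay represented.
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample


# Hypothetical dataset: 80 documents from one region and 20 from another.
documents = (
    [{"id": i, "region": "EU"} for i in range(80)]
    + [{"id": i, "region": "US"} for i in range(80, 100)]
)

simple = random_sample(documents, 10)
stratified = stratified_sample(
    documents, key=lambda doc: doc["region"], fraction=0.1
)
print(sum(doc["region"] == "US" for doc in simple))      # varies with the seed
print(sum(doc["region"] == "US" for doc in stratified))  # fixed by the fraction
```

A simple random sample may over- or under-represent the smaller group by chance, while the stratified sample preserves each group's share by construction.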

Specialized Software for Other Research Domains

The other "iQ" software packages cater to different scientific fields [3] [4] [6]:

- Sphinx iQ3 is a full-spectrum survey solution that also includes text analysis capabilities, allowing for thematic analysis and automatic coding of comments using AI [3].

- Andor iQ3 is designed for multi-dimensional image acquisition in microscopy, featuring a workflow-oriented interface for complex imaging protocols in fields like cell biology [4].

- For researchers in fields like cancer biology, constructing and analyzing signal transduction pathways is a key task. This often involves using specialized systems biology tools and databases (e.g., KEGG, Reactome, STRING) to generate networks from high-throughput "omics" data [6].

References

- 1. Text iQ Best Practices [qualtrics.com]

- 2. Text iQ Functionality [qualtrics.com]

- 3. Advanced survey software: iQ3 [lesphinx.es]

- 4. Andor launches iQ3 Multi-Dimension Image Acquisition ... [selectscience.net]

- 5. Analyzing Text Data: Text Analysis Methods - Research Guides [libguides.gwu.edu]

- 6. Databases and tools for constructing signal transduction ... [pmc.ncbi.nlm.nih.gov]

Application Notes: Reflexive Thematic Analysis in Qualitative Research

Thematic Analysis (TA) is a foundational method for identifying, analyzing, and reporting patterns (themes) within qualitative data. It is widely used in psychology, healthcare, social sciences, and customer experience research to gain deep insights into complex human experiences and perspectives [1]. The following protocol details the widely cited six-phase approach to Reflexive Thematic Analysis as outlined by Braun and Clarke [1].

Experimental Protocol: The Six Phases of Reflexive Thematic Analysis

The workflow for conducting a Reflexive Thematic Analysis is iterative and can be visualized as follows:

Diagram 1: The iterative workflow of Reflexive Thematic Analysis.

The table below provides the objectives and detailed methodologies for each phase.

Table 1: Protocol for Conducting Reflexive Thematic Analysis

| Phase | Objective | Detailed Methodology |

|---|---|---|

| 1. Familiarization | To immerse in the data and gain a deep understanding of its content. | Repeatedly and actively read the entire dataset (e.g., interview transcripts, survey responses). Take initial, unstructured notes and jot down early ideas for codes [1]. |

| 2. Generating Codes | To systematically identify and label noteworthy features across the entire dataset. | Work through the dataset line-by-line or segment-by-segment. Apply concise, descriptive labels (codes) to data items that are relevant to the research question. Code inclusively and comprehensively at this stage [1]. |

| 3. Searching for Themes | To group related codes into broader, meaningful patterns. | Analyze the generated codes and group them into candidate themes. Consider how different codes may combine to form an overarching theme. Create initial thematic maps to visualize relationships [1]. |

| 4. Reviewing Themes | To refine the candidate themes, ensuring they accurately represent the dataset. | Check if the candidate themes form a coherent pattern. Review the coded data extracts for each theme to assess if they support the theme. This may involve splitting, combining, or discarding themes [1]. |

| 5. Defining Themes | To articulate the essence and scope of each final theme. | Conduct a detailed analysis of each theme to determine the core story it tells. Generate a clear name and a detailed definition for each theme, describing its scope and relevance [1]. |

| 6. Producing the Report | To present the analysis in a scholarly report. | Weave the analytic narrative together with vivid, compelling data extracts. Finalize the analysis by contextualizing the findings within existing literature and clearly explaining the significance of the themes [1]. |
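The bookkeeping behind Phases 2 to 4 can be sketched as a simple data structure. The extracts, codes, and theme names below are invented for illustration, and the helper functions are hypothetical. Coding and theme construction remain interpretive work by the analyst; the code only collates extracts and flags themes with little supporting data as a prompt for review, not as a rule for keeping or discarding a theme.

```python
from collections import defaultdict

# Phase 2 output: each data extract labelled with one or more codes.
coded_extracts = [
    ("I never know when results will arrive", ["uncertainty", "waiting"]),
    ("Nobody explained the next steps", ["poor communication"]),
    ("The nurse called me back the same day", ["responsive staff"]),
    ("I waited weeks without an update", ["waiting", "poor communication"]),
]

# Phase 3: analyst-defined grouping of codes into candidate themes.
candidate_themes = {
    "Living with uncertainty": {"uncertainty", "waiting"},
    "Communication gaps": {"poor communication"},
    "Supportive care": {"responsive staff"},
}


def extracts_by_theme(coded_extracts, candidate_themes):
    """Collate every extract under each theme that shares a code with it."""
    collated = defaultdict(list)
    for text, codes in coded_extracts:
        for theme, theme_codes in candidate_themes.items():
            if theme_codes & set(codes):
                collated[theme].append(text)
    return dict(collated)


def thin_themes(collated, candidate_themes, minimum=2):
    """Phase 4 aid: list themes with fewer than `minimum` supporting extracts."""
    return [
        theme
        for theme in candidate_themes
        if len(collated.get(theme, [])) < minimum
    ]


collated = extracts_by_theme(coded_extracts, candidate_themes)
for theme, extracts in collated.items():
    print(theme, len(extracts))
print(thin_themes(collated, candidate_themes))
```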

Leveraging Software and AI in Thematic Analysis

Diagram 2: The role of AI and software in supporting Thematic Analysis.

Table 2: Overview of Common Qualitative Data Analysis Software (QDAS)

| Software | Key Features | Potential Application in TA |

|---|---|---|

| NVivo | A comprehensive platform supporting various data types and analysis approaches [1]. | Managing large datasets, complex coding, querying, and organizing themes. |

| MAXQDA | User-friendly interface with strong mixed-methods capabilities and AI features [1]. | Streamlining the coding process, inter-coder reliability checks, and visual tools. |

| InfraNodus | Focuses on data visualization and AI thematic analysis by building knowledge graphs [1]. | Generating initial thematic maps, identifying central concepts, and revealing gaps in the data. |

| ATLAS.ti | Powerful tools for coding, visualizing relationships, and incorporating AI [1]. | Deep analysis of text, multimedia data, and exploring connections between codes. |

A Note on IQGAP3 in Cancer Research

If your query was related to the protein IQGAP3, it is a significant oncogene studied in cancer biology, not a qualitative research method. Recent studies highlight its role as a scaffold protein that promotes cancer stemness, metastasis, and therapy resistance.

- Key Signaling Pathways: IQGAP3 has been shown to activate the Hedgehog signaling pathway by regulating its key effector, GLI1, to promote stemness in non-small-cell lung cancer (NSCLC) [2]. Concurrently, it promotes the Wnt/β-catenin signaling pathway by disrupting the Axin1-CK1α interaction, leading to β-catenin stabilization and increased proliferation in gastric cancer [3].

- Experimental Techniques: Common protocols to study IQGAP3 include cell transfection with specific siRNAs, Western blotting, co-immunoprecipitation (Co-IP) to identify protein-protein interactions, and RNA sequencing to identify downstream effectors [2] [3].

The signaling role of IQGAP3 in cancer can be summarized as follows:

Diagram 3: IQGAP3 promotes cancer progression through multiple signaling pathways.

Key Takeaways for Researchers

- Choose Your Framework: Decide between an inductive (data-driven) or deductive (theory-testing) approach to Thematic Analysis early on, as this will guide your entire coding process [1].

- Embrace Reflexivity: Acknowledge your active role as a researcher in interpreting the data. Documenting your assumptions and decisions enhances the transparency and rigor of your analysis [1].

- Leverage Technology: Use QDAS tools not just for organization, but for deeper exploration. AI-powered visualization can help identify non-obvious patterns and connections in your data [1].


Application Note: A Researcher's Guide to Modern Survey Distribution Methods

Introduction

For professionals in drug development, collecting high-quality data from patients, healthcare providers, and the public is paramount. This data collection often relies on surveys for patient-reported outcomes (PROs), satisfaction studies, and market research. The distribution channel used directly impacts response rates, data quality, and ultimately, the reliability of the study results [1] [2].

This application note provides a detailed overview of current survey distribution methods. It is designed to help research teams make evidence-based decisions, implement these methods effectively, and integrate them into their clinical and research protocols.

Summary of Distribution Methods & Comparative Analysis

A multi-channel approach is often necessary to reach a diverse participant population. The table below summarizes the key characteristics of prevalent distribution methods to facilitate comparison and initial selection.

Table 1: Comparative Analysis of Survey Distribution Methods for Clinical Research

| Method | Best Use Cases in Clinical Research | Estimated Response Rate/Engagement | Relative Cost | Key Advantage | Primary Limitation |

|---|---|---|---|---|---|

| Email [3] [2] | Patient follow-ups, PROs, satisfaction surveys, stakeholder (KOL) engagement | Varies by relationship; highly dependent on subject line and list quality [3] | Low | Direct, personalized communication; easy to track [3] | High risk of being missed or marked as spam [3] [2] |

| Embedded Website [1] [2] | Capturing real-time feedback from site visitors, usability testing of patient portals | High for short, triggered surveys; contextual feedback [1] | Low | Captures in-the-moment feedback with high context [1] | Can be intrusive if not implemented carefully [2] |

| Social Media [1] [3] | Broad market research, patient recruitment for non-interventional studies, brand perception | High potential reach; engagement varies by platform and content [1] | Low to High (with ads) | Unparalleled reach and advanced demographic targeting [1] | Difficult to stand out; audience may not be representative [3] |

| SMS/WhatsApp [1] [2] | Post-visit feedback, medication adherence prompts, quick check-ins | Very high; ~90% of SMS opened within 3 mins [3]; 80% of WhatsApp msgs read in 5 mins [2] | Low | High open rates and immediacy; conversational [2] | Character limits; requires consent; not for complex surveys [2] |

| QR Codes [3] [2] | In-clinic feedback, conference/event data collection, physical marketing materials | Growing in popularity (scans quadrupled in 2024) [2] | Very Low | Effortless bridge between physical and digital channels [3] | Requires smartphone and user knowledge [3] [2] |

Detailed Experimental Protocols for Implementation

For a research study to be reproducible and compliant, the methods must be clearly defined. Below are detailed protocols for two high-impact distribution methods.

Protocol 1: Distributed Email Survey for Longitudinal Patient-Reported Outcomes

- Objective: To collect PRO data from a cohort of clinical trial patients at scheduled intervals via email.