OF-1

Content Navigation

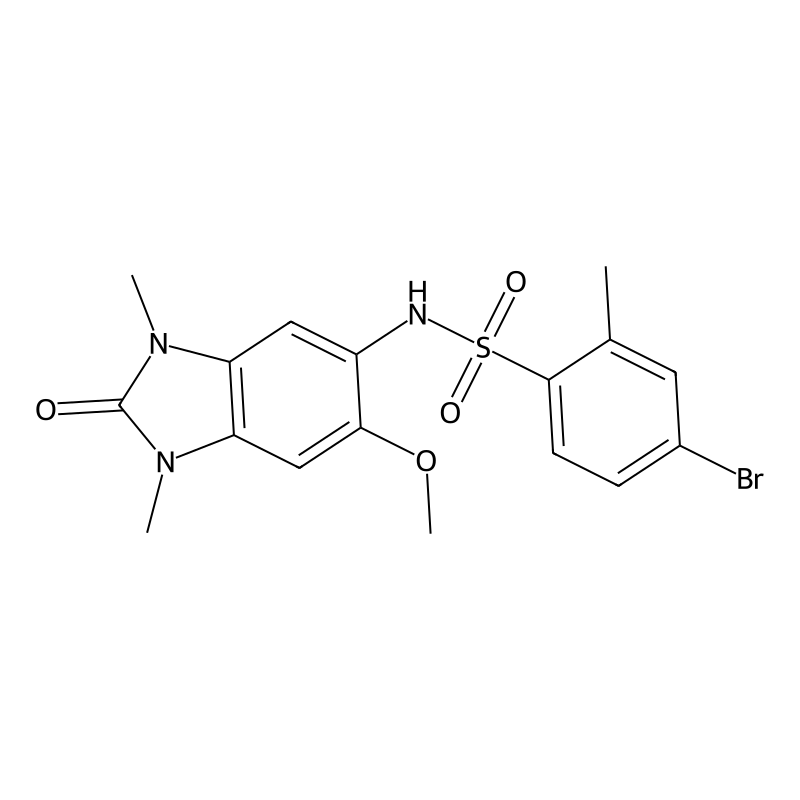

CAS Number

Product Name

IUPAC Name

Molecular Formula

Molecular Weight

InChI

InChI Key

SMILES

solubility

Synonyms

Canonical SMILES

A Framework for N-of-1 Trials of Individualized Therapies

An N-of-1 trial in this context refers to a single-case experimental design for a therapy tailored to a patient's unique genetic variant, which may be found in only one or a few individuals [1]. This is distinct from traditional trials that test a single drug on a group of patients.

The core challenge is maintaining scientific rigor when the entire trial is built around a single participant. The following framework outlines the key considerations for such a trial design [1].

| Trial Component | Key Considerations for N-of-1 Design |

|---|---|

| Natural History | Collecting comprehensive, individualized baseline data on the patient's disease trajectory before treatment is fundamental for comparison [1]. |

| Clinical Outcome Assessments (COAs) | Selecting measures individualized to the patient's genotype and phenotype. They should capture function, development, and behavior, and be meaningful to the patient's quality of life [1]. |

| Defining Meaningful Change | Establishing a Minimal Clinically Important Difference (MCID) is challenging. Use pre-treatment data to understand stability and variability of measures, and employ qualitative interviews to define a meaningful change a priori [1]. |

| Safety Monitoring | Tailored to the known or suspected toxicity profiles of both the specific investigational agent and its broader therapeutic class (e.g., specific blood tests for ASOs) [1]. |

Preclinical Models for Targeted Therapy Development

Before an individualized therapy can enter an N-of-1 clinical trial, it undergoes extensive preclinical testing. A modern, effective approach leverages multiple models in an integrated pipeline to build a robust case for efficacy and to discover predictive biomarkers [2].

The diagram below illustrates this multi-stage, integrated workflow for preclinical discovery and biomarker development.

Integrated preclinical workflow and biomarker development strategy [2].

The table below details the role of each major model in this integrated pipeline.

| Preclinical Model | Role in Drug Discovery Pipeline | Key Applications |

|---|---|---|

| Cell Lines | A versatile, quick, and relatively low-cost platform for initial high-throughput screening [2]. | Drug efficacy and cytotoxicity screening; in vitro drug combination studies; initial biomarker hypothesis generation [2]. |

| Organoids | 3D models grown from patient tumor samples that more faithfully recapitulate the tumor's features. They are a bridge between cell lines and in vivo models [2]. | Investigate drug responses; evaluate immunotherapies; safety studies; predictive biomarker identification and refinement [2]. |

| PDX Models | Created by implanting patient tumor tissue into mice, they are considered the "gold standard" for clinical relevance in preclinical research [2]. | Biomarker discovery and final validation; clinical stratification; exploring new indications; most accurate prediction of clinical outcomes before human trials [2]. |

Navigating the Discovery Process

The path to an individualized therapy is complex. Here are some key steps and resources:

- Focus on the Clinical Framework First: Since the "OF-1 discovery" phase is highly specific to the target and modality, a practical approach is to first fully define the intended clinical trial. Understanding the required clinical endpoints and evidence of efficacy can inform the necessary preclinical experiments [1].

- Consult Authoritative Sources: For the latest technical standards, consult resources like the FDA's guidance on "Individualized Antisense Oligonucleotide Drug Products" and publications from institutions like the National Center for Advancing Translational Sciences (NCATS), which focuses on platform approaches for rare diseases [1] [3].

- Embrace an Integrated Approach: Relying on a single preclinical model is insufficient. A holistic strategy that leverages the strengths of cell lines, organoids, and PDX models in sequence is crucial for building a robust data package and reducing the high attrition rates in drug development [2].

OF-1 mechanism of action

What are N-of-1 Trials?

An N-of-1 trial is a clinical study design in which a single patient is the sole unit of observation and serves as their own control [1]. The core mechanism involves:

- Intra-patient Comparison: The patient receives different treatments or a placebo in a sequential, randomized order. This allows for a direct comparison of the effects within the same individual, eliminating the variability that arises when comparing different people in traditional trials [1].

- A Shift in Model: This approach represents a fundamental departure from the traditional "drug-centric" model of drug development to a "patient-centric" model. It is particularly aligned with the goals of precision oncology, which aims to match specific drugs to a patient's unique tumor profile [1].

The following diagram illustrates the conceptual workflow of an N-of-1 trial in the context of precision oncology.

N-of-1 Trials in Action: Oncology Case Studies

N-of-1 trials have been successfully implemented to fast-track the development of targeted cancer therapies. The table below summarizes two prominent examples.

| Drug (Case Study) | Patient Profile | N-of-1 Trial Design & Intervention | Key Outcomes & Role of N-of-1 |

|---|---|---|---|

| Selitrectinib (LOXO-195) [1] | Patients with TRK fusion-positive cancers who developed acquired resistance (G595R/G623R mutations) to larotrectinib. | Single-patient protocol with intrapatient dose escalation guided by real-time pharmacokinetics [1]. | Achieved sustained clinical/radiologic response. The tolerated dose (100 mg BID) and efficacy were confirmed in this single-patient setting, accelerating development [1]. |

| Selpercatinib (LOXO-292) [1] | Patients with RET-altered cancers (mutation/fusion) who progressed on multiple therapies and developed a gatekeeper mutation (RET V804M). | Single-patient protocol with pharmacokinetic-guided dose escalation at ≥7-day intervals based on a predefined protocol [1]. | Rapid symptomatic and radiologic response, including regression of brain metastases. The N-of-1 trial identified the effective dose (160 mg), which later became the approved dose. It also uncovered novel solvent-front resistance mutations (G810S, Y806C), guiding the development of next-generation RET inhibitors [1]. |

N-of-1 vs. Traditional Clinical Trial Designs

The patient-centric nature of N-of-1 trials offers distinct advantages and disadvantages compared to traditional population-based designs.

| Feature | N-of-1 Trials | Traditional Population Trials (e.g., 3+3 design) |

|---|---|---|

| Unit of Analysis | Single patient [1] | Group of patients |

| Core Principle | Patient-as-own-control; intra-patient comparison [1] | Inter-patient comparison; group averages |

| Primary Advantage | Eliminates inter-patient heterogeneity bias; ideal for rare mutations; fast-tracks development for a single patient [1] | Established regulatory pathway; assesses population-level effects and safety |

| Key Challenge | Ethical/logistical issues with washout in metastatic cancer; dynamic disease progression; variable time to response [1] | Long patient recruitment; some cohorts may receive non-therapeutic doses; molecular heterogeneity can obscure results [1] |

| Model | Patient-centric [1] | Drug-centric [1] |

A Framework for Experimental Protocols

While specific protocols depend on the drug and disease, key elements for a robust N-of-1 trial protocol in drug development include [1] [2] [3]:

- Pre-Trial Foundation: This includes genomic profiling of the patient's tumor to identify a targetable molecular lesion and a strong preclinical rationale showing the drug's activity against that specific target or resistance mutation [1].

- Structured Dosing Protocol: The protocol must define a clear plan for intrapatient dose escalation, including the starting dose, escalation steps, and criteria for increasing the dose (e.g., based on pharmacokinetic data and tolerability at predefined intervals) [1].

- Multimodal Response Monitoring: A comprehensive monitoring plan is critical. This should include:

  - Clinical and symptomatic assessment.

  - Radiologic imaging (e.g., CT/MRI) for tumor measurement.

  - Pharmacokinetic (PK) sampling to guide dosing.

  - Biomarker analysis (e.g., circulating tumor DNA) to monitor molecular response and emerging resistance [1].

- Data Aggregation: Although focused on a single patient, aggregating data from multiple N-of-1 trials can provide powerful insights into a drug's general efficacy, optimal dosing, and common resistance mechanisms [1].

Important Considerations for Researchers

- Ethical and Practical Challenges: N-of-1 designs can be challenging in oncology. Using a placebo or washout period in patients with rapidly progressive, metastatic cancer is often not feasible. Furthermore, the time for a drug to produce a response can vary, complicating the sequential treatment model [1].

- The Role of Mechanism of Action (MoA): Understanding a drug's molecular target and MoA is highly valuable in N-of-1 trials. It allows for a rational patient selection strategy and provides a biological framework for interpreting both response and resistance, as seen in the RET inhibitor case [4]. However, for complex diseases, phenotypic screens (observing a desired effect in a cellular context) without a known MoA can also be a valid starting point for discovery [4].

Understanding N-of-1 Trials in Research

Personalized (N-of-1) trials are multiple-time-period, active-comparator crossover trials that are frequently randomized and can be masked [1]. They represent an alternative to conventional randomized controlled trials (RCTs) by focusing on identifying the optimal treatment for a single individual or research subject, rather than estimating an average effect for a group [1].

This approach is particularly powerful in early-phase and translational research, as it provides both a functional analysis demonstrating a causal relationship between an intervention and an outcome, and a comparative analysis of different treatments for a specific subject [1].

N-of-1 vs. Conventional Randomized Trials

The table below summarizes the core distinctions between these two experimental approaches.

| Feature | Parallel-Group RCT | N-of-1 Trial |

|---|---|---|

| Unit of Randomization | Groups of individuals to different treatments [1] | Time periods within a single individual [1] |

| Primary Goal | Estimate the average treatment effect for a population [1] | Identify the optimal treatment for a specific individual [1] |

| Key Strength | High internal validity for group effects [1] | Direct evidence for individual treatment effect; handles heterogeneous treatment effects (HTE) [1] |

| Outcome Measurement | Typically few data points per individual [1] | Many frequent, serial measurements over time [1] |

| Ideal For | Establishing generalizable efficacy for a well-defined population [1] | Rare diseases, comorbidities, idiosyncratic responses, personalized treatment optimization [1] |
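Because the unit of randomization is the time period rather than the person, the treatment schedule can be generated programmatically. The sketch below assumes two treatments labelled A and B and randomizes their order within each cycle, so that each cycle contains every treatment once. The function name, labels, cycle count, and seed are illustrative assumptions, not taken from the cited source.

```python
import random


def randomized_schedule(treatments, n_cycles, seed=None):
    """Return one treatment label per period.

    Each cycle contains every treatment exactly once, in random order,
    so exposure stays balanced across the whole trial.
    """
    rng = random.Random(seed)  # local generator keeps the schedule reproducible
    schedule = []
    for _ in range(n_cycles):
        block = list(treatments)
        rng.shuffle(block)  # randomize the order within this cycle only
        schedule.extend(block)
    return schedule


# Hypothetical example: three cycles of two treatments (six periods in total).
print(randomized_schedule(["A", "B"], n_cycles=3, seed=42))
```

Randomizing within cycles rather than across the whole trial avoids long runs of the same treatment, which matters when the underlying condition drifts over time.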

When to Consider an N-of-1 Design

N-of-1 trials are well-suited for several key scenarios in preliminary research [1]:

- Conditions with Heterogeneous Treatment Effects (HTE): For common conditions like chronic pain, asthma, or certain behavioral problems where no single treatment is universally effective and individual responses vary significantly.

- Rare Diseases: When patient populations are too small to conduct a conventional RCT with sufficient statistical power.

- Patients with Complex Comorbidities: To evaluate treatments for individuals who would typically be excluded from RCTs due to multiple health conditions or polypharmacy.

- Pilot Studies for New Interventions: Serving as an efficient first step to test novel interventions and generate preliminary evidence before investing in large-scale RCTs.

A Framework for Designing N-of-1 Trials

The following diagram illustrates a generalized workflow for conducting an N-of-1 trial.

A generalized workflow for conducting an N-of-1 trial, highlighting preparation, active cycles, and analysis.

Statistical Analysis of N-of-1 Data

Analyzing data from an N-of-1 trial requires methods that account for serial measurements. The table below outlines the primary approaches.

| Method | Description | Key Consideration |

|---|---|---|

| Graphical Analysis | Visual inspection of outcome data plotted over time, grouped by treatment period [1]. | Serves as a first step; can be sufficient if treatment effects are large and obvious [1]. |

| Time Series Analysis | Statistical models (e.g., autoregression) that account for correlation between successive measurements [1]. | Crucial for valid inference; helps estimate and control for carryover effects [1]. |

| Advanced Analytics | Methods for high-volume data from wearables and frequent monitoring [1]. | Requires specialized statistical expertise; powerful for dense longitudinal data [1]. |

The following diagram maps out the key decision points in selecting an appropriate analytical pathway.

A decision pathway for selecting the appropriate statistical analysis method for N-of-1 trial data.
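As a rough illustration of the first two rows of the table above, the sketch below computes per-treatment means (the numeric counterpart of a graphical comparison) and the lag-1 autocorrelation of the outcome series. The function names and the example scores are hypothetical. A lag-1 value well above zero would suggest that successive measurements are not independent and that a time series model is the safer choice.

```python
from statistics import mean


def lag1_autocorrelation(series):
    """Lag-1 autocorrelation of a sequence of serial measurements."""
    m = mean(series)
    denom = sum((x - m) ** 2 for x in series)
    if denom == 0:
        return 0.0  # constant series: no variation to correlate
    num = sum((series[i] - m) * (series[i + 1] - m)
              for i in range(len(series) - 1))
    return num / denom


def mean_by_treatment(values, labels):
    """Average outcome for each treatment label."""
    groups = {}
    for value, label in zip(values, labels):
        groups.setdefault(label, []).append(value)
    return {label: mean(vals) for label, vals in groups.items()}


# Hypothetical daily symptom scores across four periods (A, B, A, B).
scores = [6, 7, 6, 4, 3, 4, 7, 6, 7, 3, 4, 3]
labels = ["A"] * 3 + ["B"] * 3 + ["A"] * 3 + ["B"] * 3

print(mean_by_treatment(scores, labels))
print(round(lag1_autocorrelation(scores), 3))
```

This is a screening step only. It does not estimate carryover effects or produce confidence intervals, which is where the autoregressive models mentioned in the table come in.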

A Practical Example: Medication Comparison

Suppose a researcher wants to compare two formulations of a drug (Formulation A vs. B) for a chronic condition in a single subject. The design can be drawn as a sequence of treatment periods separated by a washout period.

An example N-of-1 trial design comparing two drug formulations with a washout period.
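One way to produce such a diagram is to generate Graphviz DOT text from a list of periods. The sketch below is a minimal example in Python. The period names and their order are assumptions chosen for illustration, and the helper `crossover_dot` is hypothetical rather than part of any library.

```python
def crossover_dot(periods):
    """Build a Graphviz DOT string for a linear sequence of trial periods."""
    lines = ["digraph nof1 {", "  rankdir=LR;", "  node [shape=box];"]
    for i, label in enumerate(periods):
        lines.append(f'  p{i} [label="{label}"];')  # one node per period
    for i in range(len(periods) - 1):
        lines.append(f"  p{i} -> p{i + 1};")  # arrows follow time order
    lines.append("}")
    return "\n".join(lines)


# Assumed design: baseline, A, washout, B, then analysis.
design = ["Baseline", "Formulation A", "Washout", "Formulation B", "Analysis"]
print(crossover_dot(design))
```

The printed text can be saved to a file and rendered with the Graphviz command-line tool, for example `dot -Tpng design.dot -o design.png`.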

FOSL1 Core Properties & Biological Functions

FOSL1, also known as Fra-1, is a protein that plays a critical role in cellular processes. The table below summarizes its core characteristics based on current research [1].

| Property | Description |

|---|---|

| Full Name | FOS Like 1, AP-1 Transcription Factor Subunit [1] |

| Also Known As | Fra-1, FOS-related antigen 1 [1] |

| Molecular Function | Component of the AP-1 (Activator Protein 1) transcription factor complex; regulates gene expression [1] |

| Role in Cancer | Overexpressed in most solid tumors (including glioblastoma); promotes tumor progression, therapy resistance, and poor patient survival [1] |

| Key Cellular Processes | Cell proliferation, invasion, metastasis, Epithelial-Mesenchymal Transition (EMT), cancer stemness, and modulation of tumor immunity [1] |

| Regulatory Mechanisms | Regulation at multiple levels: transcriptional control by STAT3, and post-translational modifications including phosphorylation and deacetylation [1]. |

Key Signaling Pathways & Quantitative Data from Transcriptomics

RNA sequencing studies, particularly in glioblastoma (GBM), have identified specific signaling pathways and genes regulated by FOSL1. The following table consolidates key findings from these analyses [1].

| Category | Specific Pathways / Genes Identified | Associated Biological Outcomes in GBM |

|---|---|---|

| Upregulated Pathways | NF-κB signaling, Angiogenesis, Vascular mimicry, Ferroptosis [1] | Promoted inflammation, blood vessel formation, and iron-dependent cell death [1] |

| Downregulated Pathways | Wnt/β-catenin signaling, RNA processing [1] | Altered cell fate and gene expression regulation [1] |

| Key Upregulated Genes | ITGA5, SDC1, PHLDB2, TNFRSF8, ADAM8, TLR7, STEAP3, POU3F2 [1] | Linked to cell adhesion, invasion, immune response, and stemness [1] |

| Key Downregulated Genes | IFIT1, FBXO16, ARL3, BEX1 [1] | Implicated in interferon response, protein degradation, and cell differentiation [1] |

Experimental Protocols for Investigation

To investigate FOSL1's role, researchers employ a combination of cellular models, genetic manipulation, and advanced bioinformatics.

In Vitro Cell Culture and Genetic Manipulation

This protocol is used to establish model systems and perturb FOSL1 expression for functional studies [1].

- Cell Lines: Use established human glioblastoma lines (e.g., A172, U87MG) or patient-derived xenograft (PDX) cells cultured in standard media (e.g., DMEM with 10% FBS) [1].

- Plasmid Transfection:

  - Grow cells to 50-75% confluency.

  - Transfect with a FOSL1-GFP tagged ORF clone (or empty vector control) using a lipofectamine reagent.

  - Incubate for 48-72 hours and assess overexpression efficiency via Western blot or immunofluorescence [1].

RNA Sequencing and Transcriptome Analysis

This workflow generates quantitative data on gene expression changes resulting from FOSL1 manipulation [1].

- RNA Isolation: Extract total RNA from transfected and control cells using TRIzol reagent and quantify it [1].

- cDNA Synthesis & qPCR: Reverse transcribe RNA into cDNA. Validate expression of target genes (e.g., from the table above) using quantitative PCR (qPCR) with gene-specific primers and the 2^(−ΔΔCt) method for analysis [1].

- RNA-Seq & Bioinformatic Analysis:

  - Prepare cDNA libraries and perform high-throughput sequencing.

  - Identify Differentially Expressed Genes (DEGs) between FOSL1-overexpressing and control groups.

  - Perform functional enrichment analysis (e.g., GO, KEGG) on the DEGs to identify affected pathways [1].
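The qPCR validation step relies on the ΔΔCt calculation, which can be sketched in a few lines. The example assumes a single reference (housekeeping) gene and roughly 100% amplification efficiency, which is the usual assumption behind this method. The Ct values shown are invented for illustration.

```python
def fold_change_ddct(ct_target_treated, ct_ref_treated,
                     ct_target_control, ct_ref_control):
    """Relative expression by the delta-delta-Ct method.

    Assumes about 100% PCR efficiency, so each cycle doubles the product.
    """
    delta_treated = ct_target_treated - ct_ref_treated  # normalize treated sample
    delta_control = ct_target_control - ct_ref_control  # normalize control sample
    ddct = delta_treated - delta_control
    return 2 ** (-ddct)


# Hypothetical Ct values: target gene vs. a reference gene in each condition.
print(fold_change_ddct(22.0, 18.0, 24.0, 18.0))  # prints 4.0
```

A result above 1 indicates higher expression in the FOSL1-overexpressing sample than in the control, and a result below 1 indicates lower expression.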

Integrative Genomic and Pathway Workflow

For a systems-level understanding, the MIGNON workflow integrates transcriptomic and genomic data into mechanistic models of signaling pathways. The following diagram illustrates this process [2].

Diagram of the MIGNON integrative analysis workflow for RNA-Seq data. [2]

FOSL1-Regulated Signaling Network

FOSL1 exerts its effects by influencing a network of key signaling pathways. The diagram below maps these core interactions and regulatory relationships.

Core signaling pathways activated and suppressed by FOSL1, based on transcriptome data. [1]

Interpretation and Future Directions

The data shows that FOSL1 acts as a master regulator, coordinating a shift towards aggressive cancer phenotypes by simultaneously activating pro-tumorigenic pathways and suppressing others [1]. The integrative workflow demonstrates how modern bioinformatics moves beyond simple lists of genes to create mechanistic models that can predict signaling circuit activity and potentially identify new therapeutic vulnerabilities [2].

Given its central role, targeting FOSL1 or its downstream effectors presents a promising strategy. Future experimental work could focus on validating the function of the key genes identified (e.g., ITGA5, STEAP3) and testing the effects of their inhibition in combination with standard therapies.

Understanding N-of-1 Trials for Individualized Therapies

An N-of-1 trial in this context refers to a clinical trial designed for a single patient to evaluate a therapy that is itself individualized, meaning it is tailored to target genetic variants unique to that patient or a very small group [1]. This is distinct from traditional N-of-1 trials that might test already-developed drugs in a cross-over design.

This approach is crucial for tackling the "long tail" of ultra-rare genetic diseases, which often affect fewer than 1 in 1,000,000 individuals and are not commercially viable for standard drug development pathways [1]. The table below summarizes the foundational aspects of these trials.

| Aspect | Description |

|---|---|

| Core Challenge | Demonstrating efficacy and safety in a single patient or very small group, without a traditional control group [1]. |

| Therapeutic Modalities | Antisense oligonucleotides (ASOs), siRNA, mRNA, DNA/RNA editing (e.g., CRISPR) [1]. |

| Key Distinction | The drug is developed for and trialed in a single patient ("individualized therapy"), not just a standard drug tested in a single-patient design [1]. |

Key Research Gaps & Methodological Challenges

The design and execution of these trials present unique hurdles. The following table outlines the primary research gaps and methodological challenges that represent current directions for scientific refinement [1].

| Challenge/Gap | Description & Impact |

|---|---|

| Defining Meaningful Change | Establishing a Minimal Clinically Important Difference (MCID) is difficult without population data. A change that is meaningful for an individual patient may not be statistically significant via traditional methods [1]. |

| Selecting Outcome Measures | A lack of pre-validated Clinical Outcome Assessments (COAs) for ultra-rare diseases. Outcomes must be highly individualized to the patient's specific genotype and phenotype [1]. |

| Accounting for Individual Trajectory | High genotype-phenotype heterogeneity means even patients with the same disease can have different symptoms. The trial design cannot assume a uniform clinical course [1]. |

| Ensuring Scientific Rigor | Moving beyond anecdotal evidence requires rigorous, prospective data collection, including comprehensive baseline natural history data for the individual patient [1]. |

A Framework for Rigorous N-of-1 Trial Design

To address these gaps, a structured framework is recommended. The diagram below outlines the core workflow for establishing a scientifically rigorous N-of-1 trial.

Framework for designing a rigorous N-of-1 trial for individualized therapies.

Detailed Methodologies and Considerations

- Comprehensive Baseline Natural History: This involves intensive, prospective data collection on the patient's disease course before treatment begins. The longer this baseline period, the more stable the data, allowing researchers to understand the patient's individual disease trajectory and variability. This individual history serves as the internal control for the trial [1].

- Individualized Clinical Outcome Assessments (COAs): COAs should be selected through in-depth qualitative interviews with patients and caregivers to build a "disease concept model." This ensures the measured outcomes truly reflect the patient's lived experience. The focus should be on [1]:

  - Objective/Quantitative Clinical Assessments: Seizure logs, quantitative EEGs, volumetric neuroimaging, wearable biometric sensors (e.g., for gait, sleep, seizures), and neurodevelopmental assessments.

  - Qualitative Tools: To capture impacts on quality of life, morbidity, and functioning that are meaningful to the patient.

- Safety and Biomarker Monitoring: Safety protocols must be tailored to the specific therapeutic agent (e.g., ASO, CRISPR) and its known class effects. Monitoring typically includes clinical biomarkers from blood, CSF, imaging, and other tissues to assess both safety and target engagement—confirming that the drug is affecting its intended biological target [1].
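To make the idea of the baseline as an internal control more concrete, the sketch below summarizes a baseline series as a mean plus or minus k standard deviations and flags later observations that fall outside that band. This is a simple descriptive device borrowed from single-case designs, not a method described in the cited source. The multiplier `k=2` is an arbitrary illustrative choice and is not a validated threshold for meaningful change, and the weekly counts are invented.

```python
from statistics import mean, stdev


def baseline_band(baseline, k=2.0):
    """Return (low, high) bounds: baseline mean +/- k sample standard deviations."""
    centre = mean(baseline)
    spread = stdev(baseline)
    return centre - k * spread, centre + k * spread


def outside_band(values, band):
    """Return the observations that fall outside the baseline band."""
    low, high = band
    return [v for v in values if v < low or v > high]


# Hypothetical weekly seizure counts before and after treatment starts.
pre_treatment = [10, 12, 11, 9, 13, 11, 10, 12]
post_treatment = [9, 7, 6, 8]

band = baseline_band(pre_treatment)
print(band)
print(outside_band(post_treatment, band))
```

A longer baseline yields a more reliable estimate of the spread, which is one practical reason the baseline period matters so much in these trials.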

Future Directions and Conclusion

The field of individualized N-of-1 trials is nascent and rapidly evolving. Future work will focus on standardizing these proposed frameworks, developing more sophisticated statistical methods for single-case experimental designs, and creating validated, adaptable outcome assessment toolkits. Furthermore, as more of these therapies are developed, regulatory pathways will continue to be refined.

Application Notes: Core Sample Preparation Techniques for Analytical Science

Introduction to N-of-1 Principles in Assay Development

References

- 1. N-of-1 Trials, Their Reporting Guidelines, and the ... [hdsr.mitpress.mit.edu]

- 2. Why is Biomarker Assay Validation Different from that of ... [link.springer.com]

- 3. Biochemical cascade [en.wikipedia.org]

- 4. Chromogenic Assays: What they are and how ... [goldbio.com]

- 5. A guideline for reporting experimental protocols in life sciences [pmc.ncbi.nlm.nih.gov]

- 6. Chromogenic detection in western blotting [abcam.com]

A Framework for Creating Application Notes & Protocols

While the specific data for OF-1 is unavailable, the structure below is standard for documenting experimental procedures in pharmaceutical and biological research. You can populate this framework with the relevant details for your compound.

1. Introduction and Purpose

This section should briefly describe the compound, its theoretical or known mechanism of action, and the primary objectives of the experimental protocol (e.g., to determine IC50, to assess its effect on a specific signaling pathway, or to evaluate its cytotoxicity).

2. Experimental Design and Workflow

A high-level overview of the experimental process is crucial. The following workflow, modeled after established experimental design principles [1], outlines the key stages. You can adapt it to the specific needs of your compound.

3. Detailed Methodologies and Procedures

This section should contain the precise, step-by-step instructions that another researcher could follow to replicate your experiment.

- Cell Culture and Treatment: Specify cell lines, culture conditions (media, temperature, CO₂), seeding density, and the protocol for treating cells with the compound (e.g., preparation of stock solutions, serial dilutions, treatment duration).

- Viability and Proliferation Assay (Example): Detail the assay used (e.g., MTT, CellTiter-Glo), including reagent volumes, incubation times, and equipment (e.g., plate reader) with detection wavelengths.

- Signaling Pathway Analysis: If investigating a pathway, describe the method for protein extraction, protein quantification assay, Western blotting antibodies and dilutions, or the kit used for a phospho-kinase array.

- Data Collection: Define the primary output measurements (e.g., absorbance units, luminescence counts, band intensity) and how raw data is collected.

4. Data Analysis and Interpretation

Explain how the raw data will be processed and analyzed.

- Calculation of Key Metrics: Describe how values like percentage viability or fold-change over control are calculated.

- Dose-Response Curves: State the software and model (e.g., log(inhibitor) vs. response -- Variable slope (four parameters) in GraphPad Prism) used to determine metrics like IC50.

- Statistical Analysis: List the statistical tests applied (e.g., one-way ANOVA with Dunnett's post-test for multiple comparisons), with the significance threshold defined (e.g., p < 0.05).
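The calculations named in this section can be illustrated with a short sketch. It shows percentage viability relative to a vehicle control, the four-parameter logistic model itself, and a rough IC50 estimate by log-linear interpolation between the two doses that bracket 50% viability. The interpolation is a stand-in chosen to keep the example dependency-free. It is not a substitute for the nonlinear regression fit that dedicated software performs, and all function names and readings here are hypothetical.

```python
import math


def percent_viability(signal, blank, vehicle):
    """Viability as a percentage of the blank-corrected vehicle control."""
    return 100.0 * (signal - blank) / (vehicle - blank)


def four_pl(conc, bottom, top, ic50, hill):
    """Four-parameter logistic response at a given concentration."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def ic50_by_interpolation(concs, viabilities, level=50.0):
    """Estimate the concentration at `level` percent viability.

    Interpolates linearly on a log10 concentration axis between the two
    neighbouring doses that bracket `level`. Concentrations must be positive.
    Returns None if no pair of doses brackets the level.
    """
    points = sorted(zip(concs, viabilities))
    for (c1, v1), (c2, v2) in zip(points, points[1:]):
        if (v1 - level) * (v2 - level) <= 0 and v1 != v2:
            frac = (v1 - level) / (v1 - v2)
            log_c = math.log10(c1) + frac * (math.log10(c2) - math.log10(c1))
            return 10 ** log_c
    return None


# Hypothetical plate-reader values and dose-response points.
print(percent_viability(signal=600, blank=100, vehicle=1100))
print(ic50_by_interpolation([1, 100], [75.0, 25.0]))
```

For reporting, the IC50 should come from a fitted curve with its confidence interval. The interpolation above is only useful as a quick sanity check on that fit.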

Summarizing Quantitative Data

You can use tables to present experimental parameters and results clearly. Here is an example structure for a dose-response experiment.

Table 1: Example Experimental Parameters for a Compound Dose-Response Experiment

| Factor | Description | Levels / Range | Response Measured |

|---|---|---|---|

| Compound Concentration | Dose of the compound | e.g., 0.1 nM, 1 nM, 10 nM, 100 nM, 1 µM, 10 µM | Cell Viability |

| Incubation Time | Duration of cell exposure | e.g., 24, 48, 72 hours | Cytotoxicity |

| Cell Line | Model system used | e.g., HEK293, HeLa, A549 | Pathway Activation |

A Framework for Troubleshooting Inconsistent Results

The guide below provides a systematic approach to diagnose and resolve the problem of inconsistent results in experimental data.

| Troubleshooting Step | Key Actions | Objective & Notes |

|---|---|---|

| 1. Verify Data Integrity & Formulas | Check data sources; Use Excel's "Show Formulas" & "Trace Precedents" to audit calculations [1]. | Rule out errors from incorrect data retrieval or corrupted formulas. |

| 2. Confirm Experimental Parameters | Review lab notebooks, instrument settings, and software version control logs. | Ensure consistency in all physical and digital experimental conditions. |

| 3. Isolate the Inconsistency | Re-run specific data processing scripts or experimental steps in isolation. | Determine if the issue is in data generation (wet-lab) or data analysis (computational). |

| 4. Systematically Document | Record all observations, changes, and outcomes in a dedicated log. | Creates a reliable audit trail and is crucial for identifying intermittent issues [2]. |

Common Root Causes and Solutions

The table below categorizes frequent sources of inconsistency and how to address them.

| Category | Common Causes | Proposed Solutions |

|---|---|---|

| Data Input | Flawed data queries (e.g., random `pulldata()` failure) [2]; incorrect cell references in spreadsheets [1] | Simplify and test query functions independently; use Excel's error checking to find inconsistent formulas [1] |

| Experimental Procedure | Uncalibrated equipment; uncontrolled environmental factors (e.g., temperature, humidity) | Implement strict calibration schedules; standardize and document all sample handling protocols |

| Sample Handling | Sample degradation over time; inconsistent reagent batches | Use fresh aliquots and establish sample stability profiles; log and track reagent lot numbers |

| Software & Analysis | Non-standardized data processing pipelines; updates to analysis software or packages | Use version-controlled, automated analysis scripts; record all software versions and dependencies in metadata |
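For the software and analysis category, recording versions can be automated at the start of each analysis run. The sketch below uses only the Python standard library. The helper name `environment_snapshot` and the package list are illustrative assumptions, so substitute whatever your own pipeline actually imports.

```python
import json
import platform
import sys
from importlib import metadata


def environment_snapshot(packages):
    """Collect interpreter, platform, and package versions as a dictionary."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # package not installed in this environment
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }


# Hypothetical package list; store the output alongside the results.
print(json.dumps(environment_snapshot(["numpy", "pandas"]), indent=2))
```

Saving this snapshot next to each result file makes it possible to check later whether two inconsistent runs used the same software stack.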

Detailed Experimental Protocols

Protocol 1: Wet-Lab Replication for Signal Pathway Validation

This protocol is designed to confirm key findings, such as the activity in a signaling pathway.

- Cell Culture & Treatment: Plate cells in multiple replicates. Treat with the stimulus/inhibitor, including vehicle controls.

- Sample Collection: Lyse cells at precise time points to capture dynamic signaling events.

- Protein Analysis: Perform Western Blotting for phosphorylated and total protein targets (e.g., AKT, ERK).

- Data Quantification: Normalize band intensities to loading controls.

- Statistical Analysis: Use a t-test or ANOVA to compare treatment groups against controls. Inconsistency may arise from low sample size, cell contamination, or uneven antibody application.

The workflow for this validation protocol can be visualized as follows:

Wet-Lab Experimental Workflow for Pathway Validation
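The data quantification step in Protocol 1 can be sketched as follows. Each target band is divided by the loading-control band from the same lane, and the ratios are then expressed relative to the vehicle control lane. The intensities are invented, and the choice of lane 0 as the control is an assumption for illustration.

```python
def normalize_bands(target, loading, control_index=0):
    """Normalize band intensities to loading controls and a control lane.

    target and loading hold one intensity per lane, in the same lane order.
    """
    if len(target) != len(loading):
        raise ValueError("target and loading must have one value per lane")
    ratios = [t / l for t, l in zip(target, loading)]  # correct for loading
    reference = ratios[control_index]
    return [r / reference for r in ratios]  # fold change vs. control lane


# Hypothetical densitometry values: lane 0 is the vehicle control.
phospho_signal = [1000, 2400, 3000]
loading_signal = [2000, 2400, 2000]
print(normalize_bands(phospho_signal, loading_signal))  # prints [1.0, 2.0, 3.0]
```

Applying the same normalization to every replicate before running the t-test or ANOVA keeps lane-to-lane loading differences from showing up as apparent inconsistency.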

Protocol 2: Computational Validation via Enrichment Analysis

This protocol uses a bioinformatics approach to validate gene sets or pathways identified in your screen [3].

- Input Data Preparation: Start with a ranked list of genes from your OF-1 experiment.

- Run GSEA: Use Gene Set Enrichment Analysis software with a relevant gene set database (e.g., Hallmark, KEGG).

- Build Enrichment Map: Import the GSEA results into Cytoscape with the EnrichmentMap app to visualize redundant gene sets as a network [3].

- Interpret Results: In the Enrichment Map, clusters of nodes represent biological themes. A stable, significant result will appear as a well-defined cluster.

The following diagram outlines this computational process:

Computational Validation via Enrichment Analysis
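The input preparation step in Protocol 2 can be illustrated with a short sketch that turns per-gene scores into a ranked, tab-separated list, the two-column layout that the preranked mode of GSEA reads from a `.rnk` file. The gene scores are invented for illustration, and the ranking metric (for example a signed statistic from a differential expression analysis) is an assumption you would replace with your own.

```python
def to_rnk(scores):
    """Return 'gene<TAB>score' lines, sorted from highest to lowest score."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return "".join(f"{gene}\t{score:.4f}\n" for gene, score in ranked)


# Hypothetical ranking scores for three genes.
example_scores = {"ITGA5": 2.5, "BEX1": -1.2, "SDC1": 1.1}
print(to_rnk(example_scores), end="")
```

Writing the returned text to a file with the `.rnk` extension gives a ranked list that can be loaded for the GSEA run described above.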

FAQ: Addressing Specific Inconsistencies

- How can I prevent data retrieval functions from returning inconsistent results?

  Functions like `pulldata()` in Survey123 can fail if queries execute in a random order [2]. Solution: Simplify the query, avoid complex functions like `concat` within the query call, and ensure the data source itself does not contain problematic characters (like apostrophes) in key fields [2].

- My spreadsheet flags a formula as inconsistent, but it looks correct. What should I do?

  In Excel, you can choose to Ignore Error if the inconsistency is intentional [1]. To permanently disable these warnings, go to File > Options > Formulas and uncheck "Formulas inconsistent with other formulas in the region" [1].

- What is the most overlooked cause of inconsistency in biological replicates?

  Minor environmental fluctuations are often underestimated. Seemingly small changes in temperature, humidity, or the time of day when an assay is performed can significantly impact biological systems and lead to variable results.

Proposed Framework for Your Technical Support Center

Here is a model structure you can adapt for troubleshooting OF-1 concentration optimization, illustrated with an example from proton MR spectroscopy.

This diagram outlines a logical troubleshooting workflow for detecting low-concentration compounds, inspired by methods in MR spectroscopy [1].

Example FAQ: Detecting Low-Concentration Analytes

Here's an example of how you can structure a specific troubleshooting entry.

Q: Despite using a sensitive assay, I cannot reliably detect my target analyte in biological samples. What steps can I take to improve detection?

- Problem: Inability to detect a low-concentration analyte, leading to a poor signal-to-noise ratio.

- Primary Cause: The fundamental concentration of the analyte may be below the detection limit of the current method. Furthermore, the sample matrix or acquisition parameters might be suboptimal [1].

- Solution: Implement a combination of targeted pulse sequences and large-volume acquisition.

- Methodology Optimization: Replace standard pulses with spectrally selective excitation pulses (e.g., a low bandwidth-time product sinc pulse) to minimize noise and avoid exciting interfering signals [1].

- Acquisition Parameters: Use large-volume acquisition to increase the total signal. Employ single-slice selective pulse localization to achieve a shorter Echo Time (TE), which helps in preserving the signal from rapidly decaying compounds [1].

- Signal Processing: Apply post-processing techniques like water sideband removal and frequency-based channel alignment to narrow spectral linewidths and reduce artifacts [1].

Template for Quantitative Data Summary

You can use the following table structure to summarize key performance metrics of different optimization methods. The data below is an example from a different field (an ELISA kit) to illustrate the format.

| Parameter | Value | Sample Type | Notes |

|---|---|---|---|

| Sensitivity | 9.6 pg/mL | N/A | Lower detection limit [2] |

| Assay Range | 25 - 1600 pg/mL | N/A | Linear range of the standard curve [2] |

| Intra-assay CV | 2.4% | Serum | Measure of precision within a single run [2] |

| Inter-assay CV | 9.6% | Serum | Measure of precision across different runs [2] |

| Mean Recovery | 86.56 pg/mL | Human Serum (Donors) | Accuracy of measuring the analyte in a complex matrix [2] |
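The precision rows in the table are coefficients of variation. As a minimal sketch of how such figures are typically derived, the example below computes CV% from replicate readings; all readings are hypothetical and are not taken from the cited kit.

```python
import statistics

def percent_cv(values):
    """Coefficient of variation in percent: 100 * sample SD / mean."""
    mean = statistics.mean(values)
    return 100.0 * statistics.stdev(values) / mean

# Hypothetical replicate readings (pg/mL) of one sample within a single run.
intra_run = [98.0, 101.0, 100.0, 103.0, 99.0]
# Hypothetical per-run means of the same sample across separate runs.
between_runs = [100.2, 92.5, 108.9, 97.4]

intra_cv = percent_cv(intra_run)
inter_cv = percent_cv(between_runs)
print(f"Intra-assay CV: {intra_cv:.1f}%")
print(f"Inter-assay CV: {inter_cv:.1f}%")
```

Inter-assay CV is usually the larger of the two because it also absorbs day-to-day and operator-to-operator variation.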

References

Understanding 1/f Noise and the CCS Technique

1/f noise (flicker noise) is a low-frequency phenomenon that can significantly impact the sensitivity of electronic circuits, especially those involved in low-frequency signal processing or that use frequency down-conversion [1]. It's a primary concern for the accuracy of experimental data.

The Complementary Cascode Switching (CCS) technique is a hardware-based method designed to mitigate this noise. The core principle involves using two identical MOSFET transistors (M11 and M12) that are switched with complementary 50% duty cycle clocks. This switching action, combined with a cascode structure that presents a low-impedance node to the drains, disrupts the flicker noise generation process, pushing the noise to higher harmonics of the switching frequency where it can be filtered out [1].

The diagram below illustrates the three key circuit configurations analyzed in the study:

Experimental Protocol & Performance Data

This section provides the methodology for implementing the CCS technique and a summary of its quantitative performance as reported in the source research [1].

Experimental Protocol: Implementing the CCS Technique

- Circuit Fabrication: The receiver circuit, including the CCS-based OOK detector, matching circuitry, limiter, and All-Digital Clock and Data Recovery (ADCDR) unit, is realized in a 65 nm CMOS LPE technology.

- Clock Signal Setup:

- Generate two complementary clock signals with a 1 MHz switching frequency and a 50% duty cycle.

- It is critical to minimize the overlap between these two signals. Use de-skewing circuits to ensure one switch turns off before the other turns on.

- Biasing: Apply the constant bias voltage to the gate terminal when the switches are in the "ON" state.

- Measurement & Validation:

- Use a spectrum analyzer to measure the drain current noise across the frequency band of interest (e.g., up to 100 kHz).

- Compare the noise profile against a statically-biased reference circuit to quantify the reduction in 1/f noise.
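The comparison in the last step amounts to a ratio of power spectral densities expressed in decibels. The sketch below assumes the two traces have been exported as linear PSD values at matching frequencies; the numbers are hypothetical and chosen only to illustrate the arithmetic.

```python
import math

def reduction_db(ref_psd, ccs_psd):
    """Noise reduction in dB between two power spectral densities (same units)."""
    return 10.0 * math.log10(ref_psd / ccs_psd)

def mean_reduction_db(ref_trace, ccs_trace):
    """Average per-bin reduction over paired (frequency-matched) PSD samples."""
    per_bin = [reduction_db(r, c) for r, c in zip(ref_trace, ccs_trace)]
    return sum(per_bin) / len(per_bin)

def power_ratio(db):
    """Convert a dB figure back to a linear power ratio."""
    return 10.0 ** (db / 10.0)

# Hypothetical drain-current noise PSDs (A^2/Hz) at 1 kHz, 10 kHz, 100 kHz.
reference = [8.0e-20, 8.0e-21, 8.0e-22]
switched = [1.0e-20, 1.0e-21, 1.0e-22]

print(f"Mean reduction: {mean_reduction_db(reference, switched):.2f} dB")
print(f"9 dB corresponds to a power ratio of about {power_ratio(9.0):.2f}")
```

If the analyzer reports values already in dB units, the reduction is simply the difference between the two readings at each frequency.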

Performance Metrics of the 120 GHz Receiver using CCS [1]

| Parameter | Measured Value | Notes / Context |

|---|---|---|

| 1/f Noise Reduction | 9 dB | Reduction in drain current noise at frequencies up to 100 kHz. |

| Power Consumption | 52 µW | Total for the receiver chain (OOK detector: 2 µW, Limiter: 40 µW, ADCDR: 58 µW). |

| Energy Efficiency | 5.2 pJ/bit | - |

| Data Rate | 100 kb/s | - |

| Receiver Sensitivity | -46 dBm | - |

| Technology Node | 65 nm CMOS LPE | GlobalFoundries technology. |

| On-Chip Area | 0.56 mm² | - |

Key Configuration Findings [1]

- Duty Cycle: A 50% duty cycle was found to be optimal for achieving the maximum 9 dB noise reduction.

- Clock Overlap: A small, controlled clock overlap (simulations showed up to 50 ns) can yield an additional ~1 dB reduction, but must be carefully managed.

- Cascode Transistors: The width-to-length (W/L) ratio of the cascode transistors significantly impacts performance. Keeping the W×L product (gate area) constant while adjusting the ratio was key to achieving the reported noise reduction.
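Holding the gate area fixed while sweeping the aspect ratio is a two-equation problem: with area A = W·L and ratio r = W/L, it follows that W = √(A·r) and L = √(A/r). The sketch below applies this to a hypothetical gate area; the source does not report the actual device dimensions.

```python
import math

def dimensions(area_um2, ratio):
    """Return (W, L) in micrometres for a given W*L area and W/L ratio."""
    width = math.sqrt(area_um2 * ratio)
    length = math.sqrt(area_um2 / ratio)
    return width, length

# Hypothetical gate area of 4 um^2 swept over a few W/L ratios.
for ratio in (1, 4, 16):
    w, l = dimensions(4.0, ratio)
    print(f"W/L = {ratio:>2}: W = {w:.2f} um, L = {l:.2f} um, W*L = {w * l:.2f} um^2")
```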

Troubleshooting Guide & FAQs

Frequently Asked Questions

Q1: Why is my circuit not achieving the full 9 dB of noise reduction?

- A1: The most common reasons are a deviation from the 50% clock duty cycle, excessive overlap between the complementary clock signals, or suboptimal sizing of the main or cascode transistors. Verify your clock characteristics and layout parameters against the protocol.

Q2: Can the CCS technique be applied to any low-frequency circuit?

- A2: The technique is particularly well-suited for circuits that are not processing continuous signals, such as oscillators and the OOK detector described. It may not be directly applicable to circuits that are always on, like a standard operational amplifier [1].

Q3: How does the 1/f noise reduction impact overall receiver performance?

- A3: By lowering the 1/f noise corner frequency, the CCS technique improves the sensitivity of the receiver in the low-frequency baseband, which is crucial for accurately detecting and decoding modulated signals, especially at low data rates [1].

Troubleshooting Common Problems

| Problem | Possible Cause | Solution |

|---|---|---|

| Insufficient noise reduction (<9 dB) | Non-50% clock duty cycle; Significant clock signal overlap. | Use a precision clock generator; Implement de-skewing circuits. |

| Signal distortion at output | Transistors operating in non-saturation region when ON. | Check drain-source voltage; Adjust bias point to ensure saturation. |

| High power consumption | Incorrect sizing of transistors; Switching losses. | Re-simulate and optimize transistor dimensions (W/L) for power. |

Theoretical Background and Workflow

The CCS technique works by fundamentally altering the noise characteristics of the transistor. Switching the gate bias between strong inversion and accumulation states reduces the trapping/detrapping events that cause 1/f noise. The cascode structure and complementary switching then modulate this reduced noise, shifting its power to higher frequencies (harmonics of the 1 MHz switch frequency) where it is less harmful to the baseband signal [1].

The following flowchart summarizes the overall experimental workflow from concept to validation:

References

Troubleshooting OF-1 Assay Failure

Common Assay Problems & Solutions

| Problem | Possible Causes | Recommended Solutions |

|---|---|---|

| Weak or No Signal [1] [2] | Degraded reagents; incorrect incubation; insufficient antibody; bad standard [2]. | Use fresh reagents; check standard handling [2]; optimize incubation time/temperature [1]; increase antibody concentration [2]. |

| High Background [1] [2] | Inadequate washing; insufficient blocking; nonspecific antibody binding [1]. | Increase wash number/stringency [2]; optimize blocking buffer (e.g., BSA, casein) [1]; check detector antibody performance [3]. |

| Poor Reproducibility [1] [2] | Non-standardized pipetting; reagent lot variability; uneven coating or incubation [1]. | Strict SOPs; calibrate pipettes; consistent reagent lots; ensure even coating/incubation [1]; check plate sealers [2]. |

| Poor Duplicates [2] | Inconsistent pipetting; cross-contamination; uneven plate coating. | Change tips between samples; ensure sufficient washing; check coating procedure and plate quality [2]. |

| Matrix Interference [1] [4] | Sample components (e.g., plasma, serum) interfere with detection. | Dilute samples; use matched matrix for standards; perform spike-and-recovery experiments [1] [4]. |
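The spike-and-recovery experiment named in the last row reduces to a short calculation. In this minimal sketch the readings are hypothetical, and the 80-120% acceptance window is an assumed convention rather than a value from the cited sources; use the limits set in your own validation plan.

```python
def percent_recovery(spiked, unspiked, spike_added):
    """Recovery (%) = 100 * (spiked - unspiked) / amount of analyte added."""
    if spike_added <= 0:
        raise ValueError("spike_added must be positive")
    return 100.0 * (spiked - unspiked) / spike_added

def within_limits(recovery, low=80.0, high=120.0):
    """Check recovery against an acceptance window (80-120% is assumed here)."""
    return low <= recovery <= high

# Hypothetical readings in pg/mL.
rec = percent_recovery(spiked=265.0, unspiked=90.0, spike_added=200.0)
print(f"Recovery: {rec:.1f}% (acceptable: {within_limits(rec)})")
```

Recovery well below 100% in the sample matrix, but not in buffer, points toward matrix interference rather than a reagent problem.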

Systematic Troubleshooting Workflow

When users encounter a problem, guiding them through a logical investigation process is crucial. The following flowchart outlines a general strategy that can be adapted for various assay types.

This workflow encourages a methodical approach rather than random guessing. Key steps involve:

- Defining the Problem: Precisely identify the symptom (e.g., high background, weak signal) and review the exact experimental steps performed [5].

- Formulating & Testing Hypotheses: Based on the symptoms, hypothesize a root cause (e.g., "The blocking was insufficient") and design a small, controlled experiment to test it [5].

- Identifying Root Cause: If the initial hypothesis is incorrect, use tools like the 5 Whys or a Fishbone Diagram to explore deeper underlying factors, such as training gaps, reagent instability, or equipment malfunctions [5].

Regulatory Considerations for OOS Investigations

For professionals in drug development, handling assay failures often has regulatory implications. A key part of the formal process is the Out-of-Specification (OOS) investigation [6] [7] [5].

The FDA mandates a two-phase approach for OOS investigations [6]:

- Phase I: An initial assessment of the accuracy of the data. This includes checking for obvious analytical errors, reviewing laboratory calculations, and ensuring instrument calibration.

- Phase II: A full-scale, thorough investigation if no error is found in Phase I. This must include a review of manufacturing and production processes, and expand to assess the impact on other batches or products [6] [7].

A common regulatory citation is the failure to extend investigations to other potentially affected batches, which was a key issue in recent FDA warning letters [6] [7]. A robust Corrective and Preventive Action (CAPA) plan is required to close the investigation and prevent recurrence [6] [5].

Proactive Practices for Assay Reliability

Preventing failures is more efficient than troubleshooting them. Here are some best practices to emphasize in your guides:

- Validate with Real Samples Early: Transition from spiked buffers to real, representative samples as early as possible in development and validation. Real samples can reveal issues with matrix interference and cross-reactivity that buffers alone cannot [8] [4].

- Ensure Robust OOS Procedures: Implement clear, written procedures for OOS investigations. Ensure they define how to determine the scope of the investigation and require a thorough root cause analysis before closing a case [7] [5].

- Control Reagents and Equipment: Manage reagent lot-to-lot variability by testing new lots before full implementation [8]. Maintain equipment according to a strict schedule, as defects (e.g., scratched manufacturing surfaces) can be a root cause of failures and regulatory observations [7].

References

- 1. Common Problems in Assay Development and How to Solve ... [protocolsandsolutions.com]

- 2. Troubleshooting Guide: ELISA [rndsystems.com]

- 3. Troubleshooting common problems during ELISA ... [mybiosource.com]

- 4. How to select the right ELISA kit - Biomedica [bmgrp.com]

- 5. Deviation and OOS Investigations in Pharmaceutical ... [qualityexecutivepartners.com]

- 6. FDA Warning Letter Highlights OOS Handling and Stability ... [gmp-compliance.org]

- 7. Scientific Protein Laboratories, LLC - 712578 - 10/10/2025 [fda.gov]

- 8. Common Assay Development Issues (And How to Avoid ... [dcndx.com]

A Systematic Approach to Troubleshooting Low SNR

The core of effective troubleshooting is a structured method to isolate and identify problems. The following steps, adapted from general laboratory best practices, can be applied to SNR issues [1].

| Step | Description | Key Actions |

|---|---|---|

| 1. Identify Problem | Clearly define the symptom without assuming the cause. | "The signal from my OF-1 assay is weak and indistinguishable from background noise." |

| 2. List Explanations | Brainstorm all potential causes for the low SNR. | List obvious (e.g., low probe concentration, high background) and non-obvious (e.g., instrument calibration, light source decay) causes. |

| 3. Collect Data | Gather information to test your list of explanations. | Review controls; check reagent storage/expiry; verify instrument settings and maintenance logs; review experimental procedure. |

| 4. Eliminate Explanations | Rule out causes based on the data you collected. | If positive controls worked, eliminate the core assay chemistry. If reagents are new and stored correctly, eliminate them as a primary cause. |

| 5. Experiment | Design targeted tests for remaining potential causes. | Systematically vary one parameter at a time (e.g., probe concentration, exposure time) to find the variable that improves SNR. |

| 6. Identify Cause | Pinpoint the root cause from your experimental results. | Conclude, for example, that "the camera exposure time was 50% below the optimal value for this assay's signal intensity." |

Experimental Protocol: A Framework for SNR Optimization

This protocol is inspired by a 2025 framework for enhancing SNR in quantitative fluorescence microscopy. Its core equation treats the noise sources as independent, so the total noise variance is the sum of the variances from each source [2]:

$$\sigma^2_{\text{total}} = \sigma^2_{\text{photon}} + \sigma^2_{\text{dark}} + \sigma^2_{\text{CIC}} + \sigma^2_{\text{read}}$$
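The variance sum can be turned into a small noise-budget calculation. The sketch below assumes that photon shot noise, dark current, and clock-induced charge (CIC) behave as Poisson processes, so each variance equals its mean count, and that read noise is supplied as an RMS value. The parameter values are hypothetical and do not come from the cited framework.

```python
import math

def total_noise(signal_e, dark_rate_e_per_s, exposure_s, cic_e, read_noise_e):
    """Total noise (electrons RMS) from independent sources added in quadrature.

    Photon shot, dark-current and CIC noise are modelled as Poisson processes,
    so each variance equals its mean count; read noise is given as an RMS value.
    """
    var_photon = signal_e
    var_dark = dark_rate_e_per_s * exposure_s
    var_cic = cic_e
    var_read = read_noise_e ** 2
    return math.sqrt(var_photon + var_dark + var_cic + var_read)

def snr(photon_rate_e_per_s, exposure_s, dark_rate_e_per_s=0.1,
        cic_e=0.05, read_noise_e=3.0):
    """SNR for a given exposure; all default values are hypothetical."""
    signal = photon_rate_e_per_s * exposure_s
    return signal / total_noise(signal, dark_rate_e_per_s, exposure_s,
                                cic_e, read_noise_e)

for t in (0.1, 0.4, 1.6):
    print(f"exposure {t:>4} s -> SNR {snr(500.0, t):.1f}")
```

When shot noise dominates, quadrupling the exposure roughly doubles the SNR, because signal grows linearly with time while shot noise grows as its square root.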

You can adapt its principles to other signal-based detection systems in drug development. The following workflow diagrams the optimization process.

Objective

To systematically identify the sources of noise in a detection system and implement corrective measures to maximize the Signal-to-Noise Ratio (SNR).

Materials

- Your detection instrument (e.g., plate reader, microscope, HTS scanner).

- Assay reagents, including your OF-1 probe and buffers.

- Additional optical filters (if applicable), such as secondary emission or excitation filters [2].

Methodology

The table below details the key experimental steps and their rationales.

| Step | Action | Rationale & Technical Details |

|---|---|---|

| 1. Instrument Check | Measure baseline noise with no signal. Close light source shutter, set exposure time to zero, and disable any signal gain [2]. | Isolates and measures the system's readout noise. This is the electronic noise inherent to the sensor when reading the signal. |

| 2. Background Reduction | (a) Add secondary emission and excitation filters specific to your OF-1 probe's spectrum [2]. (b) Introduce a "wait in the dark" period before signal acquisition if photobleaching is a concern. | (a) Filters block stray light and non-specific wavelengths that contribute to background photon shot noise. (b) Allows transient background fluorescence to decay. |

| 3. Signal Acquisition | Systematically increase the signal acquisition time (e.g., exposure time on a camera, integration time on a reader) while monitoring the SNR. | Maximizes the desired signal photons. The signal increases linearly with time, while photon shot noise increases as the square root, leading to a net gain in SNR. |

| 4. Data Analysis | Calculate SNR after each modification: SNR = (Mean Signal - Mean Background) / Standard Deviation of Background. | Quantifies the improvement from each optimization step. Compare results against your assay's required threshold for reliable detection. |

Frequently Asked Questions (FAQs)

Q1: My positive control works, but my experimental samples have terrible SNR. What should I do? This suggests your assay fundamentals are sound, but something in your sample is interfering. Focus your troubleshooting on background reduction. Common culprits are auto-fluorescence from sample components, non-specific binding of your probe, or contaminants in your buffers. Implementing the background reduction steps from the protocol above is your best first action [2] [1].

Q2: I've optimized everything, but my SNR is still low. Is my equipment failing? It is a possibility. The protocol's first step on verifying instrument parameters is crucial. Sensors can degrade over time, light sources (like lamps or LEDs) lose intensity, and optical pathways can become misaligned. Consult your instrument's maintenance guide and, if possible, run performance validation tests using manufacturer-supplied standards.

Q3: How can I present my SNR data clearly in a report or publication? For quantitative data, a simple table is often most effective for comparison. For example:

| Optimization Step | Mean Signal | Mean Background | Background SD | SNR |

|---|---|---|---|---|

| Initial Conditions | 1050 | 180 | 45 | 19.3 |

| After Adding Filters | 980 | 95 | 22 | 40.2 |

| After Optimizing Exposure | 1650 | 100 | 25 | 62.0 |
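The SNR column in the table above follows directly from the other three columns. This short sketch applies the formula SNR = (mean signal - mean background) / background SD to the tabulated values.

```python
def snr(mean_signal, mean_background, background_sd):
    """SNR = (mean signal - mean background) / SD of background."""
    return (mean_signal - mean_background) / background_sd

# Values copied from the table above.
steps = [
    ("Initial Conditions", 1050, 180, 45),
    ("After Adding Filters", 980, 95, 22),
    ("After Optimizing Exposure", 1650, 100, 25),
]

results = {name: round(snr(sig, bg, sd), 1) for name, sig, bg, sd in steps}
for name, value in results.items():
    print(f"{name}: SNR = {value}")
```

Adding filters lowers the mean signal slightly, yet the SNR doubles because both the background level and its spread fall by about half.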

Key Takeaways for Your Technical Center

- Adopt a Structured Method: A systematic troubleshooting framework prevents wasted effort and helps you conclusively identify root causes [1].

- Quantify Everything: The generic SNR formula, SNR = (Signal - Background) / SD_Background, is your most important metric for making objective decisions.

- Focus on Background: Often, the most effective way to improve SNR is not by amplifying a weak signal, but by aggressively reducing all sources of noise [2].

References

OF-1 Protocol Optimization & Troubleshooting Guide

This section provides core optimization settings and a step-by-step troubleshooting flowchart for common OF-1 protocol issues.

OF-1 Protocol: Recommended Baseline Parameters

The following table summarizes key parameters for initial optimization. Adjust these values based on your specific experimental conditions and network environment [1].

| Parameter | Function | Recommended Baseline | Adjustable Range |

|---|---|---|---|

| MTU (Maximum Transmission Unit) | Optimizes data packet size for your network path [1]. | 1500 bytes | 1280 - 1500 bytes |

| TCP Window Size | Controls the amount of data in transit before acknowledgment is required [1]. | 64 KB | 16 KB - 256 KB |

| Maximum Retransmission Timeout (RTO) | Sets the wait time for a packet acknowledgment before resending. | 1 second | 200 ms - 3 seconds |

| Initial Congestion Window (CWND) | Limits data sent at the start of a connection to avoid network overload. | 10 segments | 8 - 16 segments |

Troubleshooting Workflow

The diagram below outlines a logical workflow for diagnosing frequent OF-1 protocol issues like connection timeouts and data corruption. This workflow is adapted from general protocol troubleshooting methodologies, including principles for addressing HTTP/2 and HTTP/3 errors [2].

This troubleshooting chart provides a starting point for diagnosing OF-1 issues. Follow the paths based on the specific error you encounter [1] [2].

Frequently Asked Questions (FAQs)

Q1: What are the primary configuration parameters to adjust for improving OF-1 data transfer speeds over high-latency satellite links? The most critical parameters are the TCP Window Size and Maximum Retransmission Timeout (RTO). Increase the TCP Window Size to allow more data to be "in flight" before waiting for acknowledgment. Adjust the RTO to be longer than the measured Round-Trip Time (RTT) to prevent unnecessary retransmissions. Fine-tune these parameters based on the specific bandwidth-delay product of your satellite link [1].
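The bandwidth-delay product mentioned in the answer to Q1 is simply link bandwidth multiplied by round-trip time. The sketch below uses hypothetical link figures (20 Mbit/s, 600 ms RTT) to show how far a 64 KB window falls short on a long-delay path.

```python
def bdp_bytes(bandwidth_bps, rtt_s):
    """Bandwidth-delay product: bytes that can be in flight on the path."""
    return bandwidth_bps * rtt_s / 8.0

def max_throughput_bps(window_bytes, rtt_s):
    """Upper bound on throughput when limited by the window size."""
    return window_bytes * 8.0 / rtt_s

# Hypothetical geostationary satellite link: 20 Mbit/s, 600 ms RTT.
bandwidth = 20e6
rtt = 0.6

needed = bdp_bytes(bandwidth, rtt)
print(f"Window needed to fill the link: {needed / 1024:.0f} KiB")
print(f"Throughput with a 64 KiB window: "
      f"{max_throughput_bps(64 * 1024, rtt) / 1e6:.2f} Mbit/s")
```

On such a link the window, not the bandwidth, is the bottleneck, which is why window size is the first parameter to revisit.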

Q2: During an OF-1 experiment, we encounter sporadic checksum errors. What is the most effective diagnostic approach? Follow a systematic isolation process:

- Validate Data at Source: Ensure the data generation software or instrument is functioning correctly and not producing corrupt data.

- Check Configuration: Verify the OF-1 protocol's built-in error detection and correction settings are enabled and correctly configured [1].

- Inspect Hardware: Run diagnostics on network interface cards (NICs), switch ports, and cabling. Physical layer issues like faulty cables are a common cause of non-deterministic corruption [1].

- Use a Packet Analyzer: Employ a tool like Wireshark to capture traffic. Analyze the packets to determine if corruption is happening at the OF-1 protocol level or at a lower layer (e.g., Ethernet) [2].
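Validating data at the source and again at the destination can be done with an independent checksum. The source does not state which checksum the protocol itself uses, so this sketch substitutes CRC-32 and SHA-256 from the Python standard library as stand-ins, and the chunk contents are hypothetical.

```python
import hashlib
import zlib

def crc32_of(data: bytes) -> int:
    """CRC-32 of a payload, as an unsigned 32-bit integer."""
    return zlib.crc32(data) & 0xFFFFFFFF

def sha256_of(data: bytes) -> str:
    """SHA-256 hex digest, a stronger end-to-end integrity check."""
    return hashlib.sha256(data).hexdigest()

def first_mismatch(sent_chunks, received_chunks):
    """Index of the first chunk whose CRC-32 differs, or None if all match."""
    for i, (sent, received) in enumerate(zip(sent_chunks, received_chunks)):
        if crc32_of(sent) != crc32_of(received):
            return i
    return None

sent = [b"block-0", b"block-1", b"block-2"]
received = [b"block-0", b"block-1", b"blocK-2"]  # simulated corruption
print("First corrupted chunk:", first_mismatch(sent, received))
```

Comparing per-chunk digests narrows down where in a transfer the corruption occurs, which helps separate a faulty source from a faulty link.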

Q3: How can we effectively simulate OF-1 protocol conditions to test our system's robustness before live experiments? Utilize network emulation tools that allow you to replicate real-world conditions. Key parameters to simulate include:

- Bandwidth Limitations: Throttle your connection to the expected bandwidth.

- Latency and Jitter: Introduce delays that mimic your target network (e.g., cross-continental or satellite links).

- Packet Loss and Corruption: Simulate random or burst packet loss to test the protocol's error recovery mechanisms [1].
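One widely available emulation tool on Linux is the `netem` queueing discipline, driven through `tc`. The source does not name a specific tool, so treat this choice as an assumption. The sketch below only builds the command as an argument list and does not execute it; the interface name and impairment values are hypothetical, and the options should be checked against the `tc-netem` manual page on your system.

```python
def netem_command(device, delay_ms=None, jitter_ms=None, loss_pct=None,
                  corrupt_pct=None, rate=None):
    """Build (but do not run) a `tc ... netem` command as an argument list."""
    cmd = ["tc", "qdisc", "add", "dev", device, "root", "netem"]
    if delay_ms is not None:
        cmd += ["delay", f"{delay_ms}ms"]
        if jitter_ms is not None:
            cmd.append(f"{jitter_ms}ms")  # jitter follows the base delay
    if loss_pct is not None:
        cmd += ["loss", f"{loss_pct}%"]
    if corrupt_pct is not None:
        cmd += ["corrupt", f"{corrupt_pct}%"]
    if rate is not None:
        cmd += ["rate", rate]
    return cmd

# Hypothetical satellite-like profile on a hypothetical interface name.
cmd = netem_command("eth0", delay_ms=300, jitter_ms=20,
                    loss_pct=1, corrupt_pct=0.1, rate="2mbit")
print(" ".join(cmd))
```

Applying the command requires root privileges, and the impairment stays in place until the qdisc is deleted, so run it on a dedicated test interface.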

Advanced Optimization Methodology

For researchers requiring peak performance, these advanced techniques can yield significant improvements.

Advanced Optimization Parameters for High-Performance Computing (HPC) Environments

The following settings are aggressive and should be tested in a controlled lab environment before deployment in production.

| Parameter | Function | Aggressive Setting | Test Command / Tool |

|---|---|---|---|

| Selective Acknowledgment (SACK) | Allows receiver to inform sender about all segments received correctly, enabling faster retransmission of only missing data. | Enable | sysctl -w net.ipv4.tcp_sack=1 |

| TCP Fast Open (TFO) | Reduces latency by allowing data transfer during the initial TCP handshake. | Enable | sysctl -w net.ipv4.tcp_fastopen=3 |

| Explicit Congestion Notification (ECN) | Allows end-to-end notification of network congestion without dropping packets. | Enable | sysctl -w net.ipv4.tcp_ecn=1 |

| UDP for Time-Sensitive Data | For data streams where latency is more critical than 100% accuracy, consider configuring OF-1 to use UDP instead of TCP. | Protocol Switch | Configure within OF-1 application settings |

Experimental Protocol Comparison Workflow

Use this Graphviz diagram to guide your decision-making process when selecting and optimizing network protocols for different types of experiments.

A Note on This Resource

This technical support center is built upon established principles of network optimization and protocol management [1] [2]. To make it fully specific for your team, please consult your OF-1 protocol's official vendor documentation and integrate any detailed parameter definitions or troubleshooting codes found therein.

References

Troubleshooting Common N-of-1 Trial Challenges

The table below outlines frequent problems, their likely causes, and evidence-supported solutions.

| Problem | Common Causes & Diagnosis | Solutions & Best Practices |

|---|---|---|

| High Data Variability | Underlying condition is not stable (e.g., episodic symptoms); outcome measures are subjective or poorly defined [1]. | Select conditions that are chronic and stable. Use objective, patient-relevant outcomes and validate tools beforehand [1] [2]. |

| Treatment Carryover Effects | Short or absent washout periods; treatment has a long half-life or prolonged effect [1] [2]. | Incorporate and optimize washout periods between treatment blocks. Choose treatments with rapid onset/offset [1] [2]. |

| Participant Burden & Dropout | Trial is too long/complex; frequent data collection is intrusive [1]. | Simplify design; use remote monitoring devices to reduce burden. Engage participant in design planning [1] [3]. |

| Unclear Data Interpretation | No pre-specified analysis plan; visual analysis of data is ambiguous [2]. | Pre-define statistical analysis; use established guidelines (CENT) for analysis/reporting [2] [3]. |

| Ethical & Logistical Hurdles | Lack of clear protocol for IRB/ethics review; drug sourcing for off-label use [1]. | Use SPENT guidelines for robust protocol development. Engage regulators early for off-label/investigational drugs [1] [3]. |
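Several rows above depend on a pre-specified schedule of randomized treatment blocks separated by washout periods. The following sketch generates one such schedule. The two-treatment design, period lengths, and washout length are hypothetical placeholders and should be replaced by values justified by the treatment's pharmacology.

```python
import random

def build_schedule(n_blocks, treatments=("A", "B"), period_days=14,
                   washout_days=7, seed=None):
    """Randomize treatment order within each block and insert washouts.

    Returns a list of (label, days) tuples; all durations are hypothetical.
    """
    rng = random.Random(seed)
    schedule = []
    for _ in range(n_blocks):
        order = list(treatments)
        rng.shuffle(order)  # random order within the block
        for treatment in order:
            if schedule:  # washout before every period except the first
                schedule.append(("washout", washout_days))
            schedule.append((treatment, period_days))
    return schedule

plan = build_schedule(n_blocks=3, seed=42)
for label, days in plan:
    print(f"{label:>8}: {days} days")
print("Total duration:", sum(days for _, days in plan), "days")
```

Fixing the seed and recording it in the protocol makes the allocation reproducible for audit while still keeping the order unpredictable to the participant.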

A Systematic Workflow for Problem-Solving

For any issue that arises, following a structured troubleshooting methodology is key. The flowchart below visualizes a generalized process adapted from IT and business problem-solving, which is highly applicable to research challenges [4] [5]. This helps in moving from symptom to root cause in a logical manner.

The diagram outlines a core iterative cycle: if your hypothesis is rejected at the testing stage, or the solution fails verification, you return to the "Gather Information" or "Establish Hypothesis" steps to refine your approach [4] [5].

Proven Techniques for Complex Problems

When the cause of a problem isn't obvious, integrating these formal techniques into the "Gather Information" and "Establish Hypothesis" stages can be highly effective:

- The Five Whys: Repeatedly ask "Why?" to move past surface-level symptoms to the root cause. For example: Why is the data missing? → The app crashed. → Why did the app crash? → The participant entered data in an unexpected format. [5]

- Brainstorming: Generate a wide list of potential causes without judgment. This is particularly useful when a problem has many possible contributors and you need to avoid anchoring on the first obvious one [5].

- Process Mapping: Create a visual map of your trial workflow (e.g., from participant consent to data collection to analysis). This can reveal bottlenecks, unnecessary complexities, or points of failure that are not apparent when looking at the protocol text alone [5].

References

- 1. The n-of-1 clinical trial: the ultimate strategy for ... [pmc.ncbi.nlm.nih.gov]

- 2. Protocol for a systematic review of N-of-1 trial protocol ... [systematicreviewsjournal.biomedcentral.com]

- 3. N-of-1 Trials, Their Reporting Guidelines, and the ... [hdsr.mitpress.mit.edu]

- 4. Steps: Your Friendly Guide to Problem Solving... Troubleshooting [onlinetoolguides.com]

- 5. From Troubleshooting IT Issues To Solving Business Problems... [itjones.com]

Frameworks for Gold Standard Validation

| Framework / Context | Core Purpose | Key Validation Steps / Metrics | Applicable Contexts |

|---|---|---|---|

| Comparative Judgement (CJ) [1] | Establish a consensus-driven benchmark for calibration. | Compares results to a "gold standard" reference set; measures alignment, bias, and reliability [1]. | AI system calibration, educational assessment, regulatory review of high-stakes exams [1]. |

| Immunohistochemistry (IHC) Assays [2] | Ensure lab-developed or FDA-approved tests perform as expected. | Validation (for lab-developed tests): Extensive testing to confirm performance. Verification (for FDA-approved tests): Confirming manufacturer's claims [2]. | Laboratory medicine, test development for diagnostic, prognostic, or predictive markers (e.g., HER2 testing in breast cancer) [2]. |

| N-of-1 Trials [3] | Determine the optimal treatment for a single individual. | Multiple crossover trials within one person; uses patient-focused outcomes and rigorous design (SPENT/CENT guidelines) for validation of individual response [3]. | Personalized medicine, rare diseases, chronic conditions, pediatrics [3]. |

Experimental Protocol for Test Validation

The validation process for IHC assays provides a clear, structured example of how a new method is validated against a gold standard [2]. The workflow can be summarized as follows:

Experimental Validation Workflow

Detailed Protocol Steps

- Pre-Validation Investigation: Before starting, a thorough investigation is required. This includes a literature review, selecting the appropriate antibody clone, confirming clinical utility with pathologists, and ensuring resources (tissue samples, controls) are available [2].

- Assay Optimization: For laboratory-developed tests, this involves testing the assay on a tissue with known target antigen expression and iteratively adjusting conditions (e.g., dilution, incubation) until an optimal staining pattern is achieved [2].

- Define Validation Plan: Determine the number of known positive and negative cases. Predictive markers typically require more cases (e.g., 20 positive and 20 negative) than non-predictive markers (e.g., 10 of each) [2].

- Identify Cases: Select cases with a range of expression levels. The "gold standard" for these cases can be prior stain results using a validated method, a non-IHC method (like ISH or molecular testing), or a tissue type defined by specific protein expression [2].

- Stain, Interpret, and Analyze: Stain all validation cases and interpret the results. Analysis typically aims for a 90% overall concordance threshold with the gold standard. Discordant cases should be scrutinized, as patterns can reveal if the issue is with sensitivity or specificity [2].

- Ongoing Monitoring: After clinical implementation, the assay must be maintained through lot-to-lot comparisons, tracking positive/negative rates, and enrollment in proficiency testing programs to detect performance "drift" [2].
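The concordance analysis in the staining step can be sketched as a 2×2 comparison against the gold standard. The case counts below follow the 20-positive/20-negative example given for predictive markers, but the individual results are hypothetical.

```python
def agreement_metrics(new_calls, reference_calls):
    """Compare binary assay calls (True = positive) with the reference method."""
    pairs = list(zip(new_calls, reference_calls))
    tp = sum(1 for new, ref in pairs if new and ref)
    tn = sum(1 for new, ref in pairs if not new and not ref)
    fp = sum(1 for new, ref in pairs if new and not ref)
    fn = sum(1 for new, ref in pairs if not new and ref)
    return {
        "overall": 100.0 * (tp + tn) / len(pairs),
        "positive_agreement": 100.0 * tp / (tp + fn),  # sensitivity-like
        "negative_agreement": 100.0 * tn / (tn + fp),  # specificity-like
        "discordant": fp + fn,
    }

# Hypothetical predictive-marker set: 20 reference-positive, 20 reference-negative.
reference = [True] * 20 + [False] * 20
new_assay = [True] * 18 + [False] * 2 + [False] * 19 + [True] * 1

metrics = agreement_metrics(new_assay, reference)
print(metrics)
print("Meets 90% threshold:", metrics["overall"] >= 90.0)
```

Reporting positive and negative agreement separately shows whether the discordant cases cluster on the sensitivity side or the specificity side.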

References

Comparing OF-1 to Alternative Compounds

A Framework for Your Comparison Guide

Once you gather the data, you can structure your guide using the following outline. The table below suggests how to organize quantitative data for easy comparison.

Table 1: Key Properties for Comparative Analysis

| Property | OF-1 | Alternative A | Alternative B | Experimental Context (e.g., Assay Type, Cell Line) |

|---|---|---|---|---|

| Potency (e.g., IC50 in nM) | | | | |

| Efficacy (e.g., % Max Response) | | | | |

| Selectivity Index | | | | |

| Solubility | | | | |

| Metabolic Stability | | | | |

| Key Reference | | | | |

Visualizing Experimental Workflows

For the experimental data you cite, you can use Graphviz to create clear workflow diagrams. Below is a generic template for a high-throughput screening (HTS) workflow, a common method in drug discovery. You can adapt it to fit the specific experiments you reference.

Diagram: High-Throughput Screening Workflow [1]

This diagram outlines a logical sequence for a screening assay. When you have details on the specific experiments used to compare OF-1, you can replace these generic steps with precise ones (e.g., "ATP-based cell viability assay" or "GPCR beta-arrestin recruitment assay").

References

N-of-1 Trials vs. Standard Treatments: A Conceptual Comparison

The table below summarizes the core differences between these two approaches. It's important to understand that they serve different primary purposes: standard treatments aim to establish average efficacy for a population, while N-of-1 trials seek to determine optimal effectiveness for an individual [1] [2].

| Feature | N-of-1 Trials | Standard Treatments (via RCTs) |

|---|---|---|

| Primary Unit of Study | Single patient [1] [2] | Patient population [3] |

| Core Objective | Find the best treatment for an individual patient [2] | Establish the average efficacy of a treatment for a group [4] |

| Control | Patient acts as their own control (e.g., through crossover with placebo/other drugs) [2] | Concurrent control group (placebo or standard therapy) [4] |

| Key Outcome | Personalized treatment decision | Generalizable treatment guideline or approval |

| Ideal Application | Chronic conditions with stable symptoms; optimizing therapy when multiple options exist [2] | Establishing initial drug safety & efficacy; diseases with predictable, uniform progression |

| Generalizability | Low for populations, high for the individual studied [2] | High for the target population (though see "Efficacy-Effectiveness Gap" below) [3] |

The fundamental distinction lies in their perspective. Standard treatments are derived from a "drug-centric" model, whereas N-of-1 trials represent a "patient-centric" model [1]. The diagram below illustrates this core philosophical and methodological difference.

The Real-World Performance Gap of Standard Treatments

While RCTs are the gold standard for regulatory approval, their tightly controlled "efficacy" does not always translate perfectly to real-world "effectiveness". A 2024 population-based cohort study directly quantified this "efficacy-effectiveness gap" for multiple myeloma treatments [3].

The study analyzed 3,951 real-world (RW) patients and 2,476 patients from RCTs across seven standard-of-care regimens. Key findings are summarized below:

| Outcome Measure | Real-World vs. RCT Results (Pooled Hazard Ratio) | Interpretation |

|---|---|---|

| Progression-Free Survival (PFS) | HR = 1.51 (95% CI: 1.03-2.21), p=0.034 [3] | RW patients had a 51% higher risk of progression or death compared to RCT patients. |

| Overall Survival (OS) | HR = 1.76 (95% CI: 1.31-2.36), p<0.001 [3] | RW patients had a 76% higher risk of death compared to RCT patients. |
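The interpretation column follows directly from the hazard ratios, and the reported p-values can be roughly cross-checked from the confidence intervals. The sketch below assumes a Wald-type interval that is symmetric on the log scale; the study's own method may differ, so treat the recovered p-values as approximate. The function names are illustrative.

```python
import math


def hr_percent_change(hr):
    """Percent change in hazard implied by a hazard ratio (1.51 gives about +51%)."""
    return (hr - 1.0) * 100.0


def p_from_hr_ci(hr, ci_low, ci_high, z_crit=1.959964):
    """Approximate two-sided p-value from a hazard ratio and its 95% CI.

    Assumes the CI was built as exp(log(HR) +/- z_crit * SE).
    """
    se = (math.log(ci_high) - math.log(ci_low)) / (2.0 * z_crit)
    z = math.log(hr) / se
    # Two-sided tail probability of a standard normal variable.
    p = math.erfc(abs(z) / math.sqrt(2.0))
    return z, p


if __name__ == "__main__":
    for name, hr, lo, hi in [("PFS", 1.51, 1.03, 2.21), ("OS", 1.76, 1.31, 2.36)]:
        z, p = p_from_hr_ci(hr, lo, hi)
        print(f"{name}: {hr_percent_change(hr):+.0f}% hazard, z={z:.2f}, p={p:.4f}")
```

Under this assumption the recovered values agree with the table: roughly p = 0.034 for PFS and p < 0.001 for OS.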

This gap is attributed to several factors [3]:

- Patient Selection: RCTs use strict inclusion criteria, often excluding older patients and those with more comorbidities or aggressive disease.

- Treatment Adherence & Management: RCTs enforce strict protocols with close monitoring, which may be harder to maintain in routine practice.

N-of-1 Trial Protocols and Applications

An N-of-1 trial is a structured, single-subject study in which a patient is the sole unit of observation. The goal is to determine the best intervention for that individual using objective, data-driven criteria [2].

Core Experimental Protocol

The methodology can be adapted but generally follows this workflow, which you can visualize in the diagram below.

Key Methodological Details:

- Randomization & Blinding: The order in which treatments (e.g., drug A, drug B, or placebo) are administered is randomized. The patient and clinician are often blinded to the sequence to reduce bias [2].

- Washout Periods: A key element is the inclusion of washout periods between treatments to prevent "carryover" effects from one therapy to the next [2].

- Outcome Measurement: The chosen outcome (e.g., pain score, fatigue level, tumor marker) must be measurable repeatedly and be responsive to treatment changes. The use of modern wireless medical monitoring devices can greatly facilitate remote and objective data collection [2].
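To make the washout idea concrete, the sketch below lays out a calendar for a given treatment order, inserting a washout block between consecutive treatment periods. The period and washout lengths (14 and 7 days) are placeholder assumptions; in practice they depend on the onset and half-life of the treatments being compared.

```python
from datetime import date, timedelta


def build_schedule(sequence, start, period_days=14, washout_days=7):
    """Return a list of (label, first_day, last_day) tuples.

    `sequence` is the treatment order, e.g. ["A", "B", "B", "A"].
    A washout block is placed between consecutive treatment periods.
    """
    schedule = []
    day = start
    for i, treatment in enumerate(sequence):
        if i > 0 and washout_days > 0:
            schedule.append(("washout", day, day + timedelta(days=washout_days - 1)))
            day += timedelta(days=washout_days)
        schedule.append((treatment, day, day + timedelta(days=period_days - 1)))
        day += timedelta(days=period_days)
    return schedule


if __name__ == "__main__":
    for label, first, last in build_schedule(["A", "B", "B", "A"], date(2025, 1, 6)):
        print(f"{label:8s} {first} to {last}")
```

Outcome measurements would then be scheduled inside the treatment blocks only, so that data collected during washout are not attributed to either therapy.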

Case Studies in Oncology Drug Development

N-of-1 designs have been used to fast-track development in precision oncology [1]:

- Selpercatinib (LOXO-292): Two patients with RET-altered cancers were treated via single-patient protocols. Real-time, intrapatient dose escalation was guided by pharmacokinetics and established a tolerated dose of 160 mg twice daily, the same dose later confirmed in large-scale trials and approved by the FDA [1].

- Selitrectinib (LOXO-195): This second-generation TRK inhibitor was administered to two patients who had developed resistance to first-generation TRK inhibitors. The N-of-1 trial provided the first evidence of its clinical activity, guiding further development [1].

A Collaborative, Not Competitive, Relationship

For researchers and drug developers, N-of-1 trials and standard RCTs are best viewed as complementary tools.

- Use N-of-1 trials to rapidly explore therapeutic options for individual patients with rare mutations, identify resistance mechanisms, and guide personalized dosing strategies [1].

- Rely on standard RCTs to establish the average treatment effect for a population, which remains the foundation for regulatory drug approval and the creation of public treatment guidelines [3] [4].

The regulatory landscape is also evolving to support more flexible designs. The FDA's recent final guidance on ICH E6(R3) Good Clinical Practice introduces more flexible, risk-based approaches and embraces modern innovations in trial design [5].

References

- 1. N-of-1 Trials in Cancer Drug Development - PMC [pmc.ncbi.nlm.nih.gov]

- 2. The n-of-1 clinical trial: the ultimate strategy for ... [pmc.ncbi.nlm.nih.gov]

- 3. Comparing the clinical trial efficacy versus real-world ... [pmc.ncbi.nlm.nih.gov]

- 4. Standard treatment [en.wikipedia.org]

- 5. Regulatory Updates, September 2025 [caidya.com]

Cross-Laboratory Validation of OF-1 Protocols

An Example of Cross-Laboratory Validation

A 2025 study provides a clear model for cross-laboratory validation in nanomaterials research. The workflow and key findings are summarized below [1].

Experimental Workflow for Cross-Lab Validation [1]

Key Experimental Data and Outcomes [1]

| Aspect | Description |

|---|---|

| Objective | Predict successful synthesis of copper nanoclusters (CuNCs) and provide mechanistic insights. |

| Methodology | Remotely programmed, automated robotic synthesis using identical protocols and multiple liquid handlers/spectrometers across two independent facilities. |

| Data for ML | 40 initial training samples; validation with 6 never-seen-before samples. |

| Key Outcome | Successfully demonstrated reproducible synthesis and ML predictions across different laboratories and instruments, eliminating operator and instrument-specific variability. |

Guidelines for Rigorous Protocol Design

For structuring your comparison guide, the Standard Protocol Items: Recommendations for Interventional Trials (SPIRIT) guideline is a key framework. A planned extension for N-of-1 trials (SPENT) highlights that careful protocol development is critical for feasibility and research value. Key considerations for the protocol include the types of conditions and treatments being evaluated, specific design constituents like treatment period length, and opportunities for participant contribution during the design process [2].

A Note on Validation of Qualitative vs. Quantitative Methods

When planning validation studies, it's important to distinguish between quantitative and qualitative methods. According to clinical laboratory guidelines, their performance is characterized differently [3]:

- Quantitative Tests: Validation involves establishing a reportable range, precision, and trueness (bias) through experiments like replication and comparison of methods.

- Qualitative Tests (Binary Output): Validation focuses on different metrics, primarily clinical agreement (characterized by Percent Positive Agreement and Percent Negative Agreement) and the cutoff interval (which defines the uncertainty around the binary decision point) [3].
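The two families of metrics can be illustrated with a short sketch. The function names and the 2x2 cell naming (`tp`, `fp`, `fn`, `tn`, counted against a comparator method rather than a true gold standard) are assumptions made for this example, not terminology fixed by the guideline in [3].

```python
from statistics import mean, stdev


def agreement_metrics(tp, fp, fn, tn):
    """Percent Positive/Negative Agreement of a candidate binary test.

    tp: both methods positive      fp: candidate positive, comparator negative
    fn: candidate negative, comparator positive      tn: both methods negative
    """
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one comparator-positive and one comparator-negative sample")
    ppa = tp / (tp + fn)  # agreement among comparator-positive samples
    npa = tn / (tn + fp)  # agreement among comparator-negative samples
    return ppa, npa


def precision_cv(replicates):
    """Coefficient of variation (%) from a replication experiment."""
    return stdev(replicates) / mean(replicates) * 100.0


def mean_bias(candidate, reference):
    """Mean difference (candidate minus reference) from a comparison of methods."""
    return mean(c - r for c, r in zip(candidate, reference))


if __name__ == "__main__":
    ppa, npa = agreement_metrics(tp=45, fp=2, fn=5, tn=48)
    print(f"PPA={ppa:.2%}, NPA={npa:.2%}")
```

The counts in the usage example are invented for illustration only.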

OF-1 Comparative Effectiveness Research

What are n-of-1 Trials & CER?

- Comparative Effectiveness Research (CER) is "the generation and synthesis of evidence that compares the benefits and harms of alternative methods to prevent, diagnose, treat, and monitor a clinical condition or to improve the delivery of care" [1]. Its core question is which treatment works best, for whom, and under what circumstances [2].

- Single-Patient (n-of-1) Trials are multiple-period crossover experiments conducted within a single patient to compare the effects of two or more treatments [3]. They are a pragmatic methodology for patient-centered comparative effectiveness research, allowing for the direct estimation of individual treatment effects [4].

When to Use an n-of-1 Trial

n-of-1 trials are not suitable for every condition or treatment. The table below outlines the key indications and contraindications.

| Feature | Description | Indication | Contraindication |

|---|---|---|---|

| Heterogeneity of Treatment Effects | Treatment effect varies across patients [4]. | HTE makes evidence for a specific patient essential (e.g., SSRIs for depression) [4]. | Homogeneity of treatment effects (e.g., insulin for blood glucose reduction) [4]. |

| Chronicity | Involves long-term treatment for a chronic condition [4]. | Chronicity allows trial knowledge to inform future decisions (e.g., GERD) [4]. | Acute conditions (e.g., influenza) or one-time treatments (e.g., surgery) [4]. |

| Stability | The treatment effect is stable over time [4]. | Stable effect ensures knowledge is applicable in the future [4]. | Unstable treatment effect (e.g., warfarin effects with fluctuating vitamin K intake) [4]. |

| Effect Onset & Carryover | The time for a treatment's effect to start and dissipate [4]. | Quick onset and minimal carryover (e.g., short-acting stimulants for ADHD) [4]. | Long onset and/or carryover effects (e.g., long-acting medications) [4]. |

| Lack of Adequate Evidence | Existing evidence is insufficient to inform a treatment decision [4]. | Lack of evidence creates the need for an n-of-1 trial [4]. | Adequate evidence already exists; no need for further trial [4]. |

Designing an n-of-1 Trial: Core Methodology

An n-of-1 trial is a structured experiment. The following diagram and steps outline the core workflow.

1. Trial Design

- Treatments: Typically compare two treatments (A and B), which can include drugs, procedures, or devices [4].

- Treatment Periods: The unit of assignment is a pre-specified time period (e.g., one week). Its duration must be adequate for the treatment effect to manifest [4].

- Washout Periods: May be inserted between treatment periods to eliminate the carryover effect of the previous treatment [4].

2. Randomization & Blinding

- Treatment assignment is randomized and often blocked to ensure balance. Within a block of two periods, both treatments are used (randomized to order AB or BA) [4]. This design controls for period effects.

- Blinding (single or double) is highly recommended to minimize bias.

3. Trial Execution & Data Collection

- The trial consists of multiple cycles of treatment periods [4].

- Clinical outcomes are assessed repeatedly, at least once within each treatment period. The outcomes during Treatment A periods are later compared to those during Treatment B periods [4].

4. Data Analysis

Analysis can range from simple to complex:

- Visual Inspection: Plotting the data to see trends [4].

- Simple Statistical Analysis: A paired t-test is commonly used [4].

- Advanced Methods: Time-series analysis to account for serial correlation, or Bayesian methods to incorporate prior evidence [4].

5. Clinical Decision

Upon trial completion, the patient and clinician meet to discuss the findings and decide on the long-term treatment based on the data and the patient's preferences [4].
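The blocked randomization described in step 2 can be sketched in a few lines. This is an illustrative implementation using Python's standard `random` module, with a hypothetical function name; a real trial would normally have the sequence generated and concealed by someone other than the treating clinician.

```python
import random


def blocked_sequence(n_blocks, treatments=("A", "B"), seed=None):
    """Randomize treatment order within each block.

    With two treatments, every block is either AB or BA, so both
    treatments appear equally often across the trial.
    """
    rng = random.Random(seed)  # a seed makes the sequence reproducible for auditing
    sequence = []
    for _ in range(n_blocks):
        block = list(treatments)
        rng.shuffle(block)
        sequence.extend(block)
    return sequence


if __name__ == "__main__":
    print(blocked_sequence(n_blocks=4, seed=2025))
```

Because each block contains both treatments, a slow drift in the patient's condition affects A and B periods about equally, which is the balance that blocking is meant to provide.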

Experimental Protocol for an n-of-1 Trial

A detailed protocol is essential for reproducibility and rigor. Here is a template adapted from good practice guidelines for experimental protocols [5].

| Protocol Section | Key Content to Include |

|---|---|

| Title & Objective | Clearly state the condition, patient, and treatments compared. |

| Background | Rationale based on heterogeneity of treatment effects and lack of adequate evidence for this patient. |

| Participant Information | Patient eligibility/inclusion criteria, relevant medical history. |

| Interventions | Detailed description of Treatment A and B (dose, formulation, manufacturer). |

| Trial Design | Diagram of the multi-period crossover. Specify number of cycles, period duration, and washout. |

| Randomization | Method used for randomizing treatment sequence. |

| Outcomes | Primary and secondary outcome measures, tools for measurement, and schedule. |

| Data Analysis Plan | Specify statistical methods (e.g., paired t-test, visual analysis). |

| Ethics & Consent | Process for informed consent, including right to withdraw. |

Statistical Analysis Pathways

After data collection, you must choose an appropriate analytical method. The pathway below visualizes this decision process.
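As a rough sketch of the simplest branch, the code below computes a paired t statistic on within-block differences and a lag-1 autocorrelation that can hint at whether serial correlation warrants a time-series approach. It uses only the standard library and returns the t statistic and degrees of freedom without a p-value; a library routine such as `scipy.stats.ttest_rel` is one option for that. The function names and example scores are assumptions made for illustration.

```python
import math
from statistics import mean, stdev


def paired_t(a_scores, b_scores):
    """Paired t statistic for outcomes under A vs. B, matched by block.

    Returns (mean difference, t statistic, degrees of freedom).
    """
    if len(a_scores) != len(b_scores) or len(a_scores) < 2:
        raise ValueError("need at least two matched A/B pairs")
    diffs = [a - b for a, b in zip(a_scores, b_scores)]
    sd = stdev(diffs)
    if sd == 0:
        raise ValueError("all differences are identical; t is undefined")
    n = len(diffs)
    return mean(diffs), mean(diffs) / (sd / math.sqrt(n)), n - 1


def lag1_autocorr(series):
    """Lag-1 autocorrelation of outcomes in time order."""
    m = mean(series)
    num = sum((x - m) * (y - m) for x, y in zip(series, series[1:]))
    den = sum((x - m) ** 2 for x in series)
    return num / den


if __name__ == "__main__":
    # Hypothetical symptom scores, one per treatment period, matched by block.
    a = [6, 7, 5, 6]
    b = [4, 5, 4, 3]
    print(paired_t(a, b))
```

A lag-1 autocorrelation far from zero suggests the independence assumption behind the paired t-test is questionable for that patient's data.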

Key Considerations for Researchers

- Patient and Clinician Acceptance: Preliminary evidence suggests n-of-1 trials have potential for widespread acceptance as a tool to increase therapeutic precision [3].

- Beyond a Single Patient: Data from multiple n-of-1 trials can be combined using Bayesian methods to produce more stable estimates of treatment effects for both individuals and groups [3].
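The idea of borrowing strength across trials can be illustrated with a simplified normal-normal shrinkage calculation. This is not the full Bayesian hierarchical model described in [3]: it assumes each patient's effect estimate has a known standard error and that the between-patient variance `tau2` is supplied rather than estimated. All names and numbers are hypothetical.

```python
def shrink_estimates(estimates, std_errors, tau2):
    """Pool per-patient effect estimates under a normal-normal model.

    estimates:  observed treatment effect from each n-of-1 trial
    std_errors: standard error of each estimate
    tau2:       assumed between-patient variance (must be > 0)
    Returns (pooled mean, list of shrunken per-patient estimates).
    """
    if tau2 <= 0:
        raise ValueError("tau2 must be positive")
    # Precision weights for the pooled (population) mean.
    weights = [1.0 / (se ** 2 + tau2) for se in std_errors]
    mu = sum(w * y for w, y in zip(weights, estimates)) / sum(weights)
    shrunk = []
    for y, se in zip(estimates, std_errors):
        b = tau2 / (tau2 + se ** 2)  # weight kept on the patient's own data
        shrunk.append(b * y + (1.0 - b) * mu)
    return mu, shrunk


if __name__ == "__main__":
    mu, individual = shrink_estimates([2.0, 0.0, 1.2], [1.0, 1.0, 0.5], tau2=1.0)
    print(mu, individual)
```

Noisy individual estimates are pulled more strongly toward the pooled mean, while precise ones stay closer to the patient's own data, which is the sense in which pooling stabilizes estimates.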